Amazon CEO Andy Jassy is signaling big ambitions for the company’s custom chip efforts, and his latest shareholder comments make one thing clear: Amazon believes its in-house silicon has become a major advantage for AWS—and it may not stay an internal tool forever.

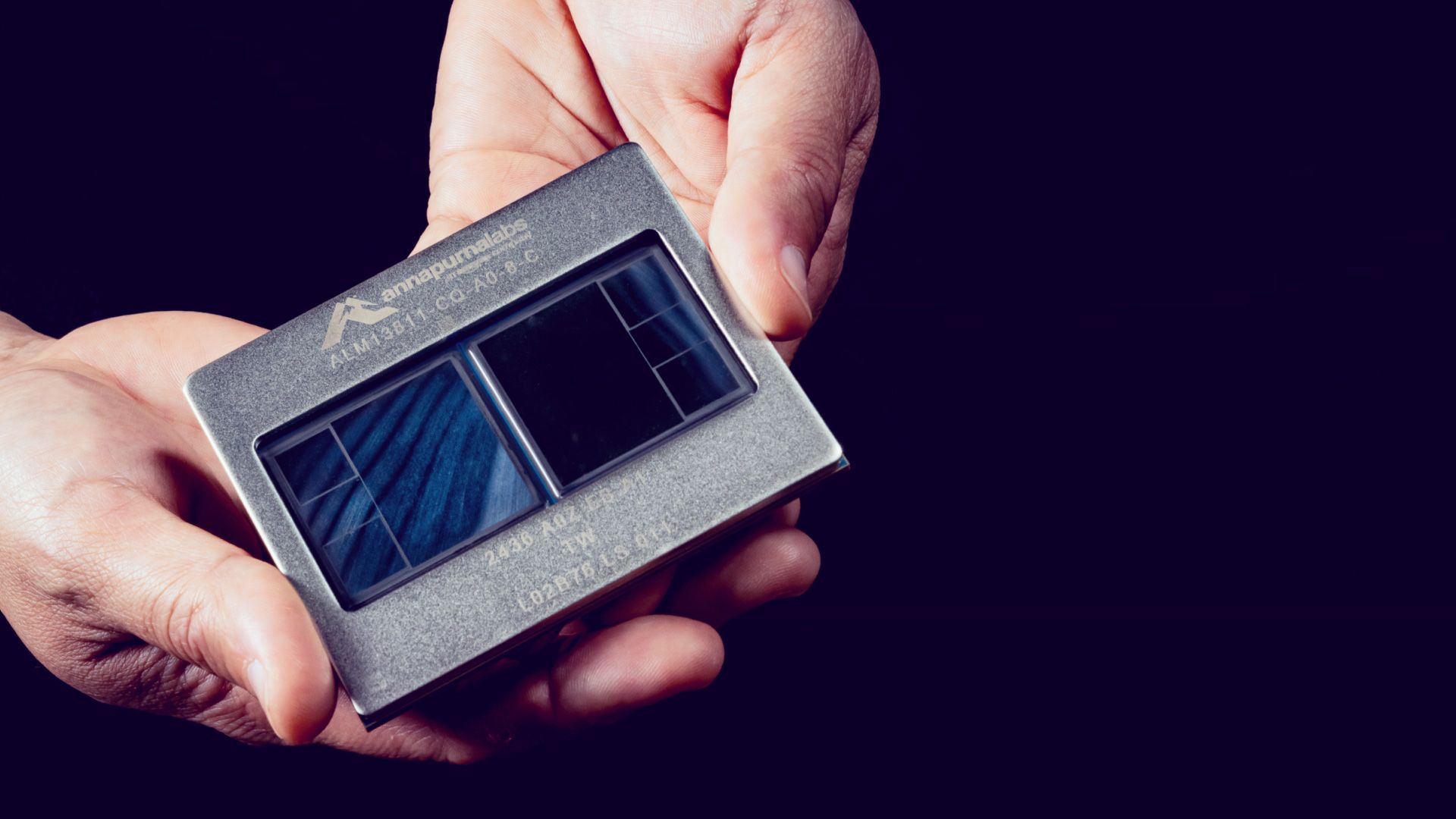

The cloud industry is deep into a “compute crunch,” where demand for AI training, inference, and data center capacity is rising faster than traditional infrastructure can keep up. To close that widening gap, hyperscalers have increasingly turned to custom silicon. Amazon has been one of the most aggressive players here, building out two key chip families: Trainium for AI workloads and Graviton for server CPUs. According to Jassy, these products have matured into a serious platform that is already reshaping AWS from the inside.

In his message to shareholders, Jassy pointed to the scale of Amazon’s chip business in a way that turned heads. He suggested that if Amazon’s custom silicon operation were viewed as its own standalone compute provider, it could be on track for an estimated $50 billion in annual recurring revenue. That’s a striking claim, and it underlines how central these chips have become to AWS’s long-term strategy—especially as AI demand continues to surge.

A major theme in Jassy’s comments is economics. He emphasized that having an in-demand in-house AI chip creates “many possibilities,” but the most important may be the ability to lower customer costs while improving AWS profitability. At meaningful volume, Amazon expects Trainium to save tens of billions of dollars in capital expenditures each year. Jassy also argued that this approach can deliver several hundred basis points of operating margin advantage (100 basis points equal one percentage point) compared with relying only on third-party chips for AI inference.

Even while reaffirming that AWS will continue to support and work with external chip providers, Jassy highlighted what many cloud customers are looking for right now: an option that wins on price-performance. He framed Trainium as a strong answer to that demand, suggesting Amazon sees its AI hardware stack as more cost-efficient at scale for many workloads.

Jassy also drew attention to how quickly AWS has shifted toward Graviton, Amazon’s line of ARM-based server CPUs. He indicated that since Graviton’s introduction, these processors have come to dominate AWS infrastructure, an implicit jab at legacy CPU market dynamics. He then hinted that a similar transition could be underway in AI, with Trainium’s role expanding across both training and inference as adoption grows.

The more interesting implication is what Amazon’s strategy says about the broader AI infrastructure market. The goal of custom infrastructure isn’t necessarily to eliminate mainstream options across the board. Instead, it’s a response to a compute shortage so large that even the biggest cloud providers can’t depend solely on the traditional supply path. By controlling more of the stack, from chips to systems, Amazon positions itself to meet demand faster, manage costs more tightly, and reduce exposure to supply constraints.

And Amazon may be thinking beyond its own data centers. Alongside the discussion of chips, the company has also hinted at the possibility of offering its rack-scale infrastructure to third-party customers. If that direction becomes reality, Amazon could move from being a major consumer of AI hardware to a direct seller of integrated AI compute systems, potentially competing head-to-head with established infrastructure providers.

With the company planning to invest “hundreds of billions” in capital expenditures to expand this business over time, the Trainium and Graviton roadmap is shaping up to be a central pillar of Amazon’s AI strategy. If Amazon follows through on external offerings—whether chips, full racks, or managed compute capacity—it could meaningfully change the landscape for AI infrastructure pricing and availability in the years ahead.