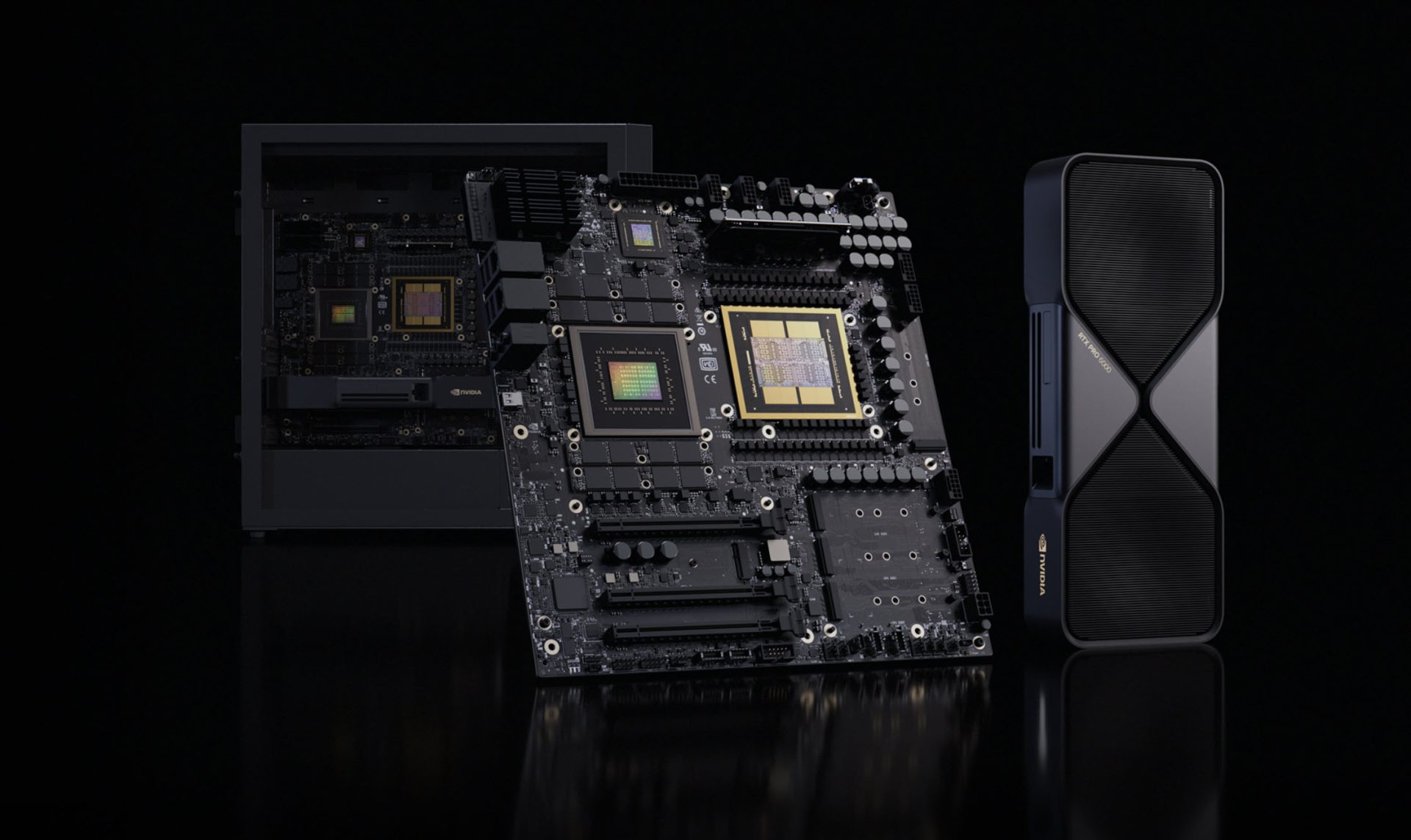

NVIDIA has officially refreshed its DGX Station AI supercomputer lineup, and this time it’s built around the new GB300 Blackwell Ultra “desktop superchip.” Announced at GTC 2026, the updated DGX Station is designed to bring serious data-center-class AI capability into a workstation-style desktop form factor, with multiple configurations expected from NVIDIA’s hardware partners.

At its core, DGX Station is positioned as a powerful AI workstation rather than a traditional consumer desktop. The idea is straightforward: take NVIDIA’s latest Blackwell Ultra platform, integrate it onto a desktop-oriented motherboard, and pair it with workstation-friendly expansion, storage, and networking so developers, researchers, and enterprises can run and iterate on AI workloads locally without relying entirely on remote infrastructure.

The headline upgrade is the Blackwell Ultra GPU inside the GB300 superchip. It packs 160 streaming multiprocessors (SMs) with 128 CUDA cores each, for a total of 20,480 CUDA cores. It also includes 640 fifth-generation Tensor Cores, support for FP8, FP6, and NVFP4 precision, and 40 MB of dedicated Tensor Memory (TMEM). NVIDIA’s focus is clear: accelerate modern AI training and inference with lower-precision formats that preserve as much accuracy as possible while significantly improving performance and efficiency.
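The per-SM figures implied by those chip-level totals can be checked with a few lines of arithmetic. This minimal Python sketch uses only the numbers quoted above; the per-SM breakdown it prints (Tensor Cores and TMEM per SM) is derived here rather than quoted from NVIDIA, and it assumes binary megabytes (1 MB = 1024 KB).

```python
# Derive per-SM figures from the chip-level totals quoted in the article.
# Assumption: "MB" is a binary megabyte (1 MB = 1024 KB).


def per_sm_breakdown(sms, cuda_cores_per_sm, tensor_cores_total, tmem_total_mb):
    """Return the chip's CUDA core total plus per-SM shares of Tensor Cores and TMEM."""
    return {
        "cuda_cores_total": sms * cuda_cores_per_sm,
        "tensor_cores_per_sm": tensor_cores_total / sms,
        "tmem_per_sm_kb": tmem_total_mb * 1024 / sms,
    }


blackwell_ultra = per_sm_breakdown(
    sms=160, cuda_cores_per_sm=128, tensor_cores_total=640, tmem_total_mb=40
)
for key, value in blackwell_ultra.items():
    print(f"{key}: {value:,.0f}")
```

Run as-is, it reproduces the 20,480 CUDA core total and works out to 4 Tensor Cores and 256 KB of TMEM per SM.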

Memory is another major leap. In DGX Station, the Blackwell Ultra GPU carries 252 GB of HBM3e, up from the previous platform’s maximum of 192 GB, with 7.1 TB/s of bandwidth to keep large models fed and reduce bottlenecks during training and inference. NVIDIA also claims roughly 50% more dense low-precision compute with NVFP4. NVFP4 is positioned as delivering near-FP8 accuracy, often within 1%, while shrinking the memory footprint by about 1.8x versus FP8 and 3.5x versus FP16, an important advantage when working with larger models or trying to increase batch sizes.
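The footprint ratios follow from how NVFP4 is laid out. As NVIDIA describes the format, each value is stored as a 4-bit E2M1 float, every block of 16 values shares one FP8 (E4M3) scale factor, and a per-tensor FP32 scale sits on top. Amortizing the 8-bit block scale over 16 values gives 4 + 8/16 = 4.5 bits per value, so 8 / 4.5 ≈ 1.78x versus FP8 and 16 / 4.5 ≈ 3.56x versus FP16, in line with the quoted figures. The sketch below simulates that block-scaled quantization with plain Python floats. It is an illustration under stated simplifications (the block scale is kept as a float instead of being rounded to E4M3, the per-tensor scale and bit packing are omitted), not NVIDIA’s implementation.

```python
# Simplified NVFP4-style block quantization, simulated with Python floats.
# Assumptions/simplifications (not NVIDIA's implementation):
#   - 16-value blocks, each value rounded to an FP4 E2M1 magnitude
#   - one scale per block, kept as a Python float (real NVFP4 stores it as FP8 E4M3)
#   - the per-tensor FP32 scale and bit packing are omitted

E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)  # all non-negative E2M1 values
E2M1_MAX = 6.0
BLOCK_SIZE = 16


def round_to_e2m1(x):
    """Round x to the nearest representable E2M1 value, keeping its sign."""
    magnitude = min(E2M1_MAGNITUDES, key=lambda m: abs(abs(x) - m))
    return magnitude if x >= 0 else -magnitude


def quantize(values):
    """Split values into 16-value blocks; return (scale, fp4_codes) per block."""
    blocks = []
    for start in range(0, len(values), BLOCK_SIZE):
        block = values[start:start + BLOCK_SIZE]
        amax = max(abs(v) for v in block)
        # Map the block's largest magnitude onto E2M1's largest value (6.0).
        scale = amax / E2M1_MAX if amax > 0 else 1.0
        blocks.append((scale, [round_to_e2m1(v / scale) for v in block]))
    return blocks


def dequantize(blocks):
    """Reconstruct approximate values from (scale, codes) blocks."""
    return [scale * code for scale, codes in blocks for code in codes]


def bits_per_value(element_bits=4, scale_bits=8, block_size=BLOCK_SIZE):
    """Effective storage per value with one block scale amortized over the block."""
    return element_bits + scale_bits / block_size


if __name__ == "__main__":
    weights = [0.013 * ((i * 7) % 23 - 11) for i in range(32)]  # arbitrary demo data
    restored = dequantize(quantize(weights))
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    eff = bits_per_value()
    print(f"effective bits/value: {eff}")  # 4.5
    print(f"vs FP8: {8 / eff:.2f}x, vs FP16: {16 / eff:.2f}x")
    print(f"worst absolute error on demo data: {worst:.4f}")
```

Scaling each small block by its own maximum is what lets a 4-bit grid track values of very different magnitudes; the cost is the extra 0.5 bits per value for the shared scale.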

On the CPU side, the system uses a single 72-core Grace processor based on Arm’s Neoverse V2 architecture, backed by 496 GB of LPDDR5X system memory with up to 396 GB/s of bandwidth. Combined with the GPU’s 252 GB of HBM3e, the platform offers 748 GB of coherent memory, an eye-catching figure for a desktop AI system built to handle demanding datasets and models.

Communication between the CPU and GPU runs over NVLink-C2C, which provides up to 900 GB/s of interconnect bandwidth. For external connectivity, the system includes a ConnectX-8 (CX8) SuperNIC rated for up to 800 Gb/s, which is particularly relevant for enterprise environments, distributed workloads, and fast data movement between systems.
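Mixing GB/s (memory and NVLink-C2C) with Gb/s (the SuperNIC) makes these figures easy to misread. One way to compare them is to convert everything to gigabytes per second and estimate how long it would take to move the same payload over each link at its peak rate. This sketch does that for 252 GB, the HBM3e capacity quoted above; it assumes decimal units (1 TB = 1000 GB, 8 bits per byte) and treats the quoted numbers as peak ratings, so sustained transfers in practice will be slower.

```python
# Compare the peak link rates quoted for DGX Station in a single unit (GB/s).
# Assumptions: decimal units (1 TB = 1000 GB, 1 byte = 8 bits) and peak ratings;
# sustained throughput in practice will be lower.

PEAK_GB_PER_S = {
    "HBM3e (GPU memory)": 7100.0,       # 7.1 TB/s
    "NVLink-C2C (CPU<->GPU)": 900.0,
    "LPDDR5X (CPU memory)": 396.0,
    "ConnectX-8 SuperNIC": 800 / 8,     # 800 Gb/s -> 100 GB/s
}


def transfer_seconds(payload_gb, bandwidth_gb_per_s):
    """Time to move payload_gb at a sustained bandwidth_gb_per_s."""
    return payload_gb / bandwidth_gb_per_s


payload_gb = 252.0  # the full HBM3e capacity quoted above
for link, bandwidth in PEAK_GB_PER_S.items():
    ms = transfer_seconds(payload_gb, bandwidth) * 1000
    print(f"{link:<24} {bandwidth:8.1f} GB/s  {ms:8.1f} ms for {payload_gb:.0f} GB")
```

At these peak rates, reading all of the HBM3e once takes roughly 35 ms, while pushing the same 252 GB through the 800 Gb/s SuperNIC takes about 2.5 s. Memory-bound work such as LLM token generation is often estimated with exactly this kind of bandwidth-divided-by-bytes calculation.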

In terms of power and expandability, the new DGX Station is rated for a 1,600 W TDP and supports NVIDIA’s latest RTX PRO Blackwell graphics cards. Expansion and I/O are thoroughly workstation-centric: four M.2 Gen5 slots for high-speed storage; four Ethernet ports (dual 400 Gb/s connections, plus one 10 GbE and one 1 GbE port); and three PCIe Gen5 x16 slots, one wired at full x16 and two wired at x8 electrically.
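The gap between a slot’s physical size and its electrical wiring matters because PCIe bandwidth scales with the number of lanes actually connected. Using the standard PCIe 5.0 signaling rate of 32 GT/s per lane with 128b/130b encoding (general PCIe figures, not taken from NVIDIA’s spec sheet), the sketch below estimates per-direction bandwidth for each slot type. It ignores packet and protocol overhead, so usable throughput is somewhat lower, and the x4 width for the M.2 slots is an assumption based on the usual M.2 NVMe configuration rather than something the announcement states.

```python
# Estimate per-direction PCIe 5.0 bandwidth from lane count.
# Uses the standard Gen5 rate (32 GT/s per lane, 128b/130b encoding) and
# ignores TLP/DLLP protocol overhead, so real throughput is somewhat lower.

GEN5_GT_PER_S = 32.0
ENCODING_EFFICIENCY = 128 / 130


def pcie_gen5_gb_per_s(lanes):
    """Approximate one-direction bandwidth in GB/s for a Gen5 link with `lanes` lanes."""
    return GEN5_GT_PER_S * ENCODING_EFFICIENCY / 8 * lanes


links = {
    "x16 slot, x16 electrical": 16,
    "x16 slot, x8 electrical": 8,
    "M.2 Gen5 (assumed x4)": 4,
}
for name, lanes in links.items():
    print(f"{name:<26} ~{pcie_gen5_gb_per_s(lanes):5.1f} GB/s per direction")
```

By this estimate, each x8-wired slot offers roughly half the bandwidth (about 31.5 GB/s) of the full x16 slot (about 63 GB/s), even though a full-length x16 card fits in all three.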

NVIDIA says DGX Station systems based on GB300 Blackwell Ultra will be offered not only directly but also through partner-built designs, giving buyers multiple options depending on preferred chassis, cooling design, and configuration approach. While official pricing hasn’t been shared, the specifications strongly suggest these machines will land in the “several thousand dollars” range or higher. Shipping is expected to begin this month.

For anyone searching for a high-end AI workstation, local LLM development box, or an enterprise-grade desktop supercomputer for model training and inference, the DGX Station with GB300 Blackwell Ultra is shaping up to be one of NVIDIA’s biggest workstation AI pushes yet.