AI companies are racing to make large language models feel instant, but response latency remains one of the biggest bottlenecks in modern AI infrastructure. In agentic AI systems especially, where a model may generate many responses in sequence, the real competitive edge often comes down to tokens per second (TPS) and how quickly a model can complete a task. While much of the industry is pushing performance forward with approaches such as tighter on-chip memory integration and SRAM-heavy designs, a young chip startup called Taalas is taking a very different path: rather than relying on general-purpose compute, it builds custom ASIC hardware that effectively “hardwires” an AI model into silicon.

Taalas says its platform can turn a previously unseen AI model into custom silicon in roughly two months. The company calls these “Hardcore Models,” and claims the resulting hardware implementation can be dramatically faster, cheaper, and more power-efficient than running the same model as software on conventional accelerators.

The approach rests on two core strategies. First, Taalas specializes at the hardware level: it maps the neural network structure of a specific LLM directly onto the chip to optimize execution for that model. Second, it pursues what it describes as “merging storage and computation,” an approach meant to ease the classic memory wall and cut the overhead of moving data around inside general-purpose systems. In other words, instead of constantly shuttling data between memory and compute blocks, the goal is to keep computation dense and communication paths short, since data movement is one of the biggest contributors to LLM latency in real-world deployments.
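The memory-wall argument can be made concrete with a common back-of-envelope estimate. The sketch below is a simplified model, not Taalas’s own analysis: it assumes single-user decoding in which every weight is fetched from off-chip memory once per generated token, and it ignores KV-cache traffic and compute time. The 16-bit weight width and the 3.35 TB/s bandwidth figure are illustrative assumptions, and `max_decode_tokens_per_s` is a hypothetical helper name.

```python
# Back-of-envelope memory-wall bound for single-stream LLM decoding.
# Simplifying assumptions (illustrative, not Taalas's own model):
#   * batch size 1: one user, one token generated per step
#   * every weight is read from off-chip memory once per generated token
#   * KV-cache traffic, compute time, and interconnect overhead are ignored


def max_decode_tokens_per_s(num_params: float,
                            bytes_per_param: float,
                            mem_bandwidth_bytes_per_s: float) -> float:
    """Upper bound on tokens/s when weight traffic is the only cost."""
    bytes_per_token = num_params * bytes_per_param
    return mem_bandwidth_bytes_per_s / bytes_per_token


if __name__ == "__main__":
    params = 8e9            # an 8B-parameter model such as Llama 3.1 8B
    bytes_per_param = 2     # assumed 16-bit (BF16/FP16) weights
    bandwidth = 3.35e12     # assumed 3.35 TB/s memory bandwidth (illustrative)
    bound = max_decode_tokens_per_s(params, bytes_per_param, bandwidth)
    print(f"~{bound:.0f} tokens/s upper bound")  # prints "~209 tokens/s upper bound"
```

Under these assumptions, an 8B-parameter model tops out near 209 tokens/s per stream no matter how fast the arithmetic units are. Halving the weight width doubles the bound, but the bigger lever is not moving the weights at all, which is the problem that merging storage and computation is meant to address.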

Taalas also pitches its approach as simpler from a system-design perspective. Rather than relying on advanced cooling, high-bandwidth memory (HBM) stacks, or complex packaging and integration, the company says the main innovation lies in the silicon engineering itself. It claims to perform computation at “DRAM-level” density, keeping communication between storage and compute fast, and credits this as a key reason its architecture reduces LLM inference latency.

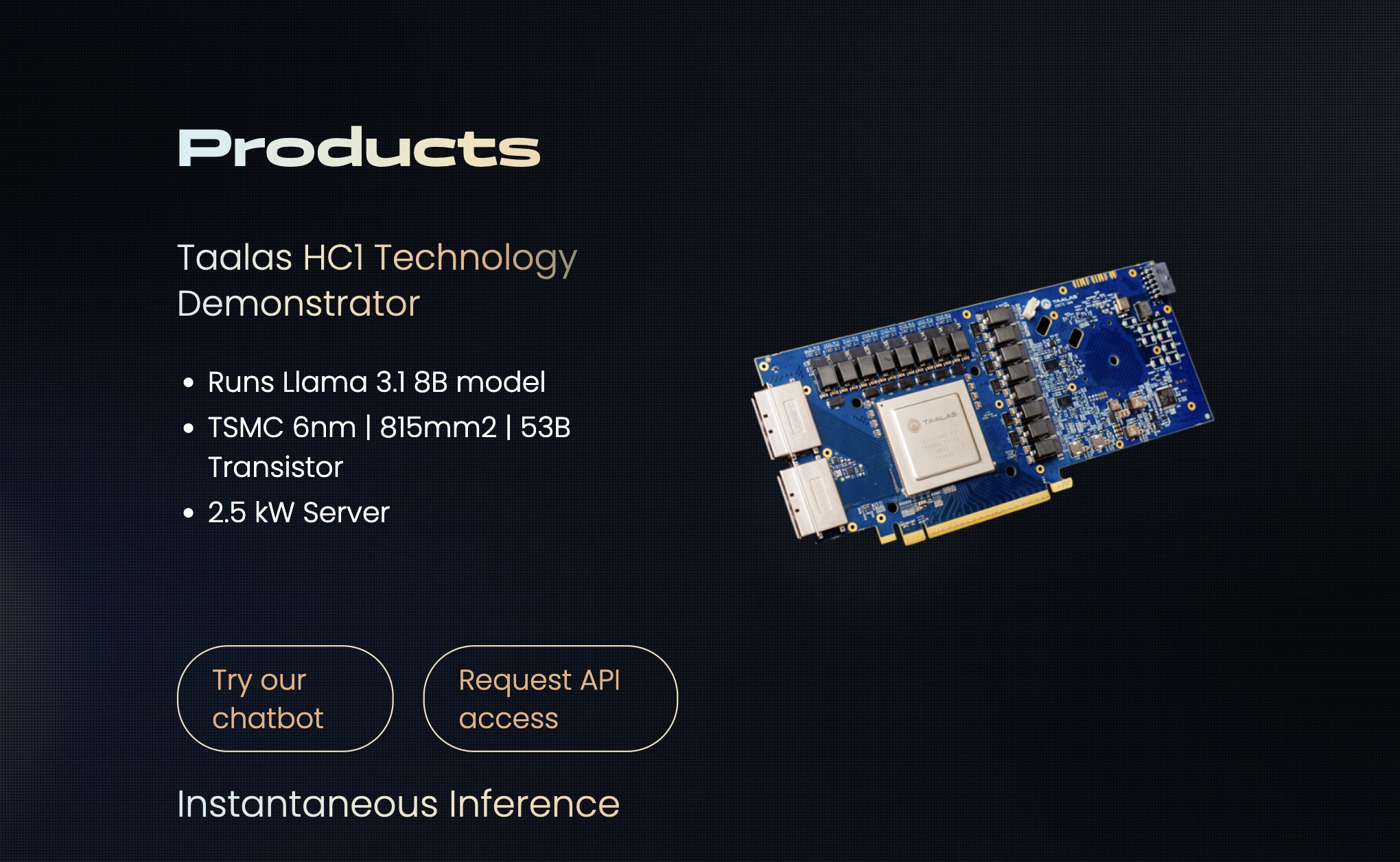

The company’s first showcased product is HC1, built around Meta’s Llama 3.1 8B model. Taalas claims HC1 can deliver around 10x the tokens-per-second performance of today’s high-end infrastructure while also cutting production costs by about 20x. If those numbers hold up across broader testing and deployments, it’s an attention-grabbing pitch for anyone trying to deploy fast, cost-efficient inference at scale.

But the technical details also highlight the tradeoffs. HC1 is reportedly built on TSMC’s 6nm process with a die size of up to roughly 815 mm², in the same range as the largest data center chips. And while hosting an eight-billion-parameter LLM is meaningful, the largest frontier models now reach the trillion-parameter range, roughly 125 times more parameters, which raises an obvious scaling challenge. A per-model, hardwired approach may need major rethinking as model sizes and architectures evolve.

To address scaling, Taalas points to clustering. The company says it has already run DeepSeek’s R1 on a multi-chip setup, achieving a reported 12,000 TPS per user in a 30-chip configuration. That suggests the roadmap isn’t limited to single-chip deployments and could instead mirror how modern AI acceleration scales out, with model-specific silicon nodes as the building blocks.
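Per-user TPS translates directly into wall-clock latency, which is why it matters for agents that chain many model calls. The sketch below converts a decode rate into per-token and end-to-end decode time. It assumes a constant decode rate and ignores time-to-first-token and network overhead; the function names, the 10-step, 500-token agent workload, and the 100 tokens/s comparison rate are hypothetical, chosen only for illustration.

```python
# Convert a per-user decode rate into wall-clock latency.
# Assumption: steady-state decode only; time-to-first-token (prompt
# processing) and network overhead are not modeled.


def per_token_latency_ms(tokens_per_s: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000.0 / tokens_per_s


def generation_time_s(num_tokens: int, tokens_per_s: float) -> float:
    """Time to stream num_tokens at a constant per-user rate."""
    return num_tokens / tokens_per_s


def agent_time_s(steps: int, tokens_per_step: int, tokens_per_s: float) -> float:
    """Total decode time for an agent whose steps run one after another."""
    return steps * generation_time_s(tokens_per_step, tokens_per_s)


if __name__ == "__main__":
    reported = 12_000  # reported per-user TPS for the 30-chip R1 setup
    baseline = 100     # hypothetical comparison rate, for illustration only
    print(f"{per_token_latency_ms(reported):.3f} ms/token")                # 0.083 ms/token
    print(f"{generation_time_s(1_000, reported):.3f} s for 1,000 tokens")  # 0.083 s
    # Hypothetical agent: 10 sequential steps of 500 tokens each.
    print(f"{agent_time_s(10, 500, reported):.3f} s at {reported} tokens/s")  # 0.417 s
    print(f"{agent_time_s(10, 500, baseline):.3f} s at {baseline} tokens/s")  # 50.000 s
```

At the reported rate, the hypothetical agent run finishes its decoding in about 0.42 seconds, versus 50 seconds at the comparison rate; because the steps are sequential, the gap scales linearly with the TPS ratio.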

The bigger question may be market fit and adoption. Hardwiring an LLM into silicon implies less flexibility: these chips would be tailored to specific models, with no simple way to swap in new weights or pivot to a new architecture short of fabricating new hardware. Still, if Taalas can consistently deliver major latency improvements and cost reductions, some parts of the AI market may view that tradeoff as worthwhile, especially high-volume, stable inference workloads where speed and cost matter more than frequent model changes.

If the startup can prove its performance claims at scale and build a viable business model around model-specific ASICs, it could offer an alternative route to faster LLM responses at lower cost—one that prioritizes specialization over generality in the race for real-time AI.