NVIDIA is booking record volumes of TSMC’s CoWoS advanced packaging as demand for AI chips surges, with current Blackwell products and the next‑gen Rubin lineup driving the ramp, according to new estimates from UBS.

The AI buildout shows no signs of slowing, and NVIDIA is moving early to secure its supply chain. UBS now expects the company to require about 678,000 CoWoS wafers in 2026, roughly a 40% jump from this year. The growth is led by Blackwell and Blackwell Ultra, which UBS sees delivering around 30% quarter‑over‑quarter shipment growth, followed by a volume ramp for Rubin.
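For a sense of scale, the two figures above imply a rough baseline for the current year. The short sketch below backs that number out; it treats the "roughly 40%" figure as exactly 40%, which is an assumption, and the variable names are illustrative only. The result is a back-of-the-envelope estimate, not a figure UBS has published.

```python
# Figures quoted from the UBS estimate cited in the article.
wafers_2026 = 678_000   # projected CoWoS wafers NVIDIA needs in 2026
growth = 0.40           # "roughly a 40% jump", treated as exact (assumption)

# If wafers_2026 = baseline * (1 + growth), the baseline follows by division.
implied_baseline = wafers_2026 / (1 + growth)
implied_increase = wafers_2026 - implied_baseline

print(f"Implied current-year wafers: ~{implied_baseline:,.0f}")
print(f"Implied additional wafers:   ~{implied_increase:,.0f}")
```

Under that assumption, the current-year requirement works out to somewhere around 484,000 wafers, so the 2026 forecast would mean TSMC supplying NVIDIA with nearly 200,000 additional CoWoS wafers in a single year.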

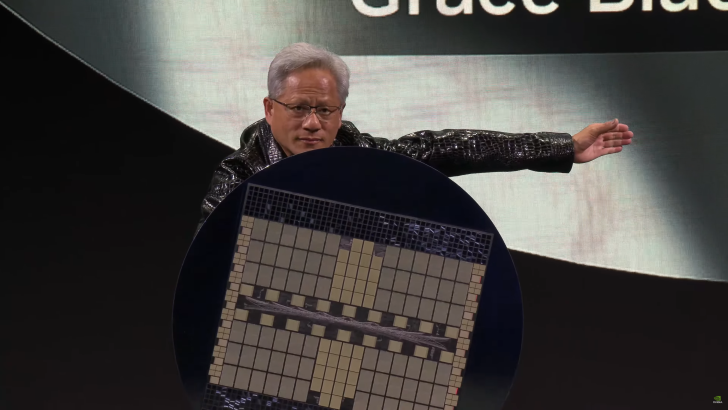

CoWoS, short for Chip-on-Wafer-on-Substrate, is the advanced packaging technology that lets NVIDIA pair massive GPU dies with HBM (high-bandwidth memory) stacks in a single package at extreme bandwidths. It’s become a critical bottleneck for the AI era, and the latest forecast suggests that crunch will intensify. UBS notes that TSMC has little time to add capacity, as orders from top cloud and enterprise customers continue to swell.

Beyond training‑class GPUs, NVIDIA’s newly announced Rubin CPX platform is expected to add even more CoWoS demand. Rubin CPX is designed for inference and uses CoWoS‑L packaging, further loading TSMC’s advanced packaging lines. Across the full lineup, UBS projects that NVIDIA’s total GPU production could climb to about 7.4 million units in 2026, a healthy year‑over‑year increase.

Despite export limits affecting certain regions, NVIDIA’s order book is expanding across a broad mix of customers. Blackwell Ultra rack‑scale systems are today’s mainstream offerings, and Rubin is slated to debut as early as 2026, setting the stage for another leap in compute for hyperscale data centers.

The takeaway is clear: AI infrastructure spending is accelerating, not cooling. With NVIDIA locking in more CoWoS capacity and preparing Rubin for volume, and with TSMC’s advanced packaging lines running flat out, the industry is bracing for another wave of high‑performance AI silicon rolling into the world’s largest data centers.