NVIDIA is pulling back the curtain on Rubin CPX, a new class of GPU designed specifically for massive-context AI. Built for workloads like million-token code understanding and long-form generative video, Rubin CPX promises a major leap in speed, efficiency, and scalability across next‑generation data centers.

At the heart of the announcement is the NVIDIA Vera Rubin NVL144 CPX platform, which pairs Rubin CPX GPUs with next‑gen NVIDIA Vera CPUs and Rubin GPUs inside an MGX-based system. In a single rack, this platform targets up to 8 exaflops of NVFP4 AI compute, delivering about 7.5x the AI performance of GB300 NVL72 systems. It also brings 1.7 PB/s of memory bandwidth and a vast pool of fast memory. Configurations highlight up to 100 TB in a rack, with options cited as high as 150 TB of GDDR7—representing up to 4x more memory than the prior generation and 3x higher Attention performance for long-context models. For customers with existing Vera Rubin 144 systems, a standalone Rubin CPX compute tray will be available to simplify upgrades.

Rubin CPX is purpose-built for long-context inference. Large language models working across entire codebases or hour‑long videos can exceed a million tokens of context, a scale that pushes traditional GPU architectures to their limits. Rubin CPX integrates long-context processing with on‑chip video encode and decode engines, enabling advanced workloads like video search and high-quality generative video without juggling data across multiple accelerators. The result is faster throughput, lower latency, and better economics for massive-context AI.

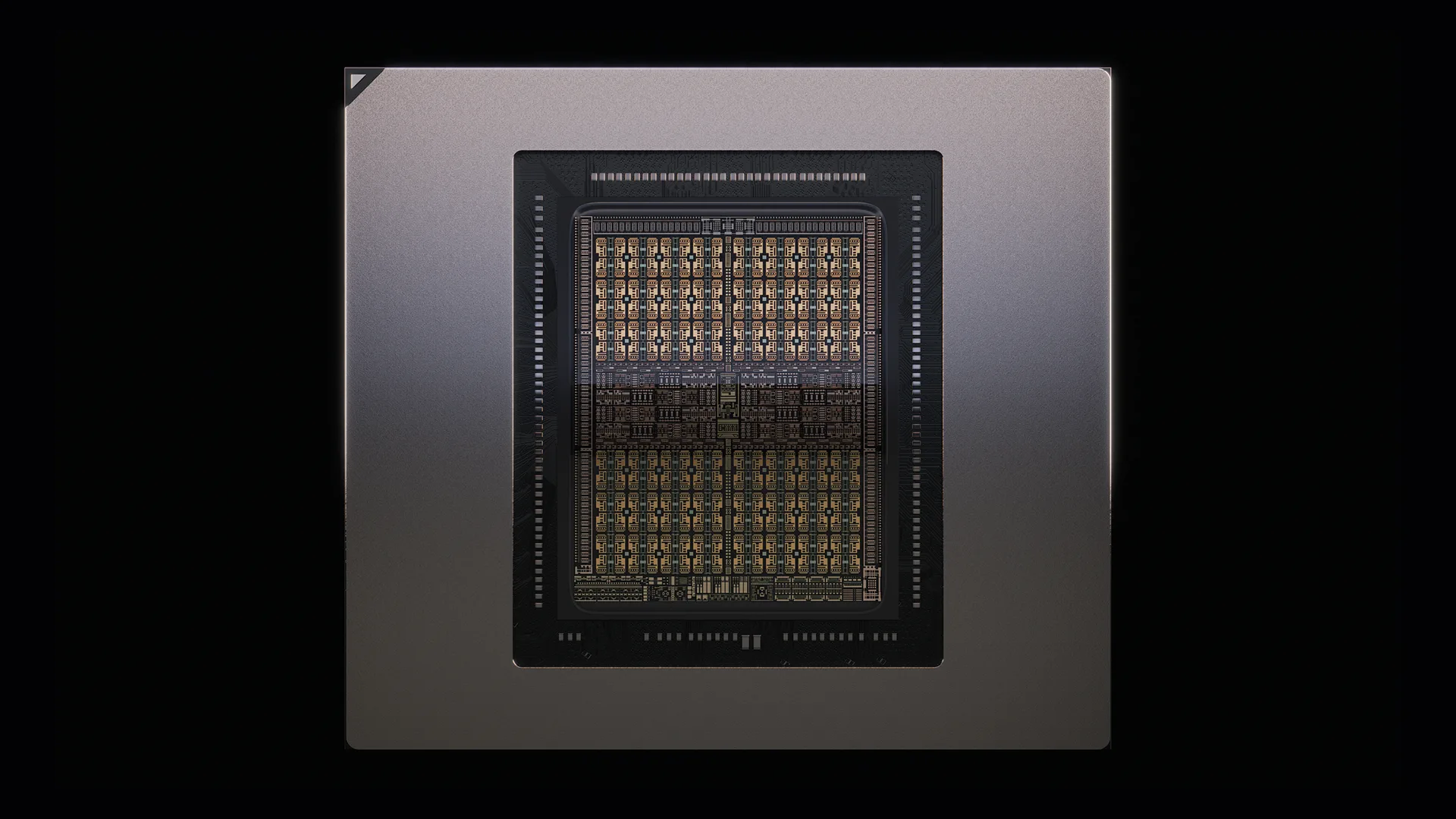

Under the hood, Rubin CPX is based on the NVIDIA Rubin architecture and uses a cost‑efficient, monolithic die packed with NVFP4 compute tailored for AI inference. Each GPU targets around 30 PFLOPs of NVFP4 compute and supports up to 128 GB of GDDR7. NVIDIA’s choice of GDDR7 over HBM for Rubin CPX emphasizes cost efficiency and broader scalability while still delivering the bandwidth needed for long-context operations. Video capabilities have also been expanded with four times the hardware encode/decode engines to accelerate generative media and multimodal AI.

The wider Rubin platform introduces complementary technologies to remove bottlenecks at scale, including Vera CPUs and ConnectX‑9 SuperNICs for high-throughput networking and orchestration across MGX systems. The combined stack aims to maximize token throughput and revenue for enterprises deploying massive-context models, turning coding assistants into systems that can truly understand, refactor, and optimize large-scale software projects.

Key platform highlights

– New Rubin CPX GPU for massive-context AI, coding, and generative video

– Integrated into the Vera Rubin NVL144 CPX MGX platform with up to 8 exaflops of NVFP4 compute

– Up to 7.5x AI performance versus GB300 NVL72 systems

– 1.7 petabytes per second of memory bandwidth

– Large memory pools, with configurations noted up to 100 TB and options up to 150 TB of GDDR7

– 3x higher Attention performance for long-context models

– Per‑GPU targets: about 30 PFLOPs NVFP4 and up to 128 GB GDDR7

– Fourfold increase in on-chip video encode/decode engines

– Optional Rubin CPX compute tray for upgrading existing Vera Rubin 144 deployments

Availability is slated for the end of 2026 for the first Rubin CPX systems. Vera Rubin is expected to enter production soon, with a broader platform launch planned around GTC 2026. For teams building long-context AI—from million-token code intelligence to generative video—Rubin CPX signals a major step toward faster, more efficient inference at data center scale.