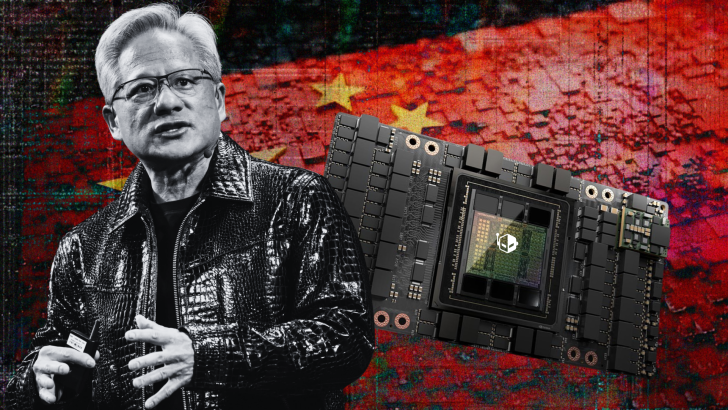

NVIDIA is reportedly preparing to make its H200 AI chips for China far more appealing by leaning on a strategy that usually works in any market: aggressive pricing. A new report citing Chinese sources suggests the company plans to keep the China-bound H200 only slightly more expensive than the H20, aiming to remove doubts about demand and position the H200 as a “too good to pass up” upgrade for local AI buyers.

The uncertainty around H200 demand in China has been understandable. Even after U.S. policy changes opened the door for exports, many in the industry questioned whether Chinese firms would prioritize imported chips while accelerating efforts to build a domestic AI hardware and software stack. There was also skepticism because the H200 is based on NVIDIA’s Hopper architecture, a generation behind the Blackwell parts the company sells in other markets. Despite those concerns, the latest reporting indicates NVIDIA expects strong interest and intends to make price the deciding factor.

According to Chinese media reports referenced by analyst Jukan, an 8-chip H200 cluster could be priced around $200,000—roughly in line with what comparable H20 configurations have been selling for. If accurate, that would be a notable move because the H200’s hardware capabilities are a meaningful step up from the H20, particularly in memory capacity and bandwidth, and in broader suitability beyond inference workloads.

On paper, the H200 brings several major advantages. It uses HBM3E memory rather than HBM3, increases memory capacity from roughly 96GB to 141GB (about a 47% jump), and boosts memory bandwidth from around 4.0 TB/s to 4.8 TB/s (roughly 20% higher). It also supports configurations beyond PCIe, including SXM form factors, and offers full NVLink support—important for multi-GPU scaling in large training and high-performance computing environments. The H20, by contrast, is an export-limited variant designed primarily for inference and typically comes with tighter limitations at the system level.
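The percentages quoted above are easy to check. The following is a minimal Python sketch that recomputes them from the approximate figures in this article. The helper `percent_increase` and the constant names are illustrative and not from any NVIDIA tool. The per-chip price is a naive division of the reported cluster price that ignores chassis, networking, and integration costs, so treat it as a rough upper bound rather than a quoted chip price.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


# Approximate figures as reported in the article, not official pricing or specs.
H20_MEMORY_GB, H200_MEMORY_GB = 96, 141
H20_BANDWIDTH_TBS, H200_BANDWIDTH_TBS = 4.0, 4.8
CLUSTER_PRICE_USD, CHIPS_PER_CLUSTER = 200_000, 8

memory_uplift = percent_increase(H20_MEMORY_GB, H200_MEMORY_GB)
bandwidth_uplift = percent_increase(H20_BANDWIDTH_TBS, H200_BANDWIDTH_TBS)

# Assumption: the whole cluster price is attributed to the eight chips.
price_per_chip = CLUSTER_PRICE_USD / CHIPS_PER_CLUSTER

print(f"Memory capacity uplift: {memory_uplift:.1f}%")
print(f"Memory bandwidth uplift: {bandwidth_uplift:.1f}%")
print(f"Implied price per chip: ${price_per_chip:,.0f}")
```

Run as written, the sketch gives a capacity uplift just under 47%, a bandwidth uplift of 20%, and an implied ceiling of about $25,000 per chip if the reported $200,000 figure holds.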

Some estimates in the report suggest performance gains could be dramatic in certain scenarios, even claiming improvements of more than six times—although real-world results will depend on the workload, software stack, system design, and the specific constraints of any China-compliant configurations. Still, even a smaller uplift would be substantial if pricing remains close to H20 levels.

Timing is also a key part of the story. The report claims NVIDIA could ship an initial batch of H200 chips to China by mid-February, assuming U.S. regulatory approval proceeds as expected. Separately, another report indicates that major Chinese tech companies—including Alibaba, Tencent, and ByteDance—are preparing for a major infrastructure buildout now that access to stronger compliant accelerators may be improving. The spending figure being discussed is significant: up to $31 billion in AI infrastructure investment, largely centered on compliant hardware from NVIDIA and AMD.

The bigger takeaway is that demand fundamentals in China haven’t changed as much as some expected. Training and operating large-scale AI systems still require enormous compute density and mature software support, and NVIDIA remains the most established supplier in both hardware performance and developer ecosystem. While domestic alternatives are advancing, they continue to face obstacles such as manufacturing capacity constraints and less developed software platforms. For Chinese cloud providers, hyperscalers, and AI labs trying to stay competitive, access to capable accelerators still matters, and price-performance can quickly outweigh concerns about whether a chip generation is “new enough.”

If NVIDIA can truly deliver H200-class capability at only a modest premium over the H20, it could reshape purchasing decisions across China’s AI sector and trigger a new wave of data center upgrades focused on compliant, high-throughput GPU clusters.