Security researchers investigating the Halo smart smoke detector have uncovered a troubling mix of weak protections and real-world privacy risks, raising fresh questions about how far “safety tech” can go before it becomes a surveillance tool.

According to the researchers, the device’s security was so underdeveloped that it bordered on manufacturer negligence. One of the biggest red flags was the absence of secure boot, a foundational safeguard that helps prevent unauthorized software from running at startup. Without it, the researchers were able to dump the contents of the device’s internal compute module and begin reverse-engineering how it works and communicates.

From there, they escalated access through the detector's hosted web interface. The team says they were able to brute-force their way to admin privileges because the system lacked meaningful authentication protections. In other words, gaining high-level control didn't require a sophisticated exploit, just the kind of weak security posture that attackers routinely look for in connected devices.
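The researchers don't describe how the Halo's login handler actually works, so the following is only a minimal sketch of the kind of protection that makes brute-forcing impractical: a throttle that refuses further attempts for a while after repeated failures. The class name `LoginThrottle`, its default thresholds, and the injected `verify` and `clock` callables are all hypothetical choices made for illustration.

```python
import time
from typing import Callable


class LoginThrottle:
    """Hypothetical brute-force guard: locks out login after repeated failures."""

    def __init__(
        self,
        verify: Callable[[str], bool],
        max_failures: int = 5,
        lockout_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.verify = verify                  # checks a password, e.g. against a stored hash
        self.max_failures = max_failures
        self.lockout_seconds = lockout_seconds
        self.clock = clock                    # injectable so the lockout can be tested
        self.failures = 0
        self.locked_until = float("-inf")     # not locked at start

    def attempt(self, password: str) -> bool:
        now = self.clock()
        if now < self.locked_until:
            return False                      # refuse every guess, even a correct one, while locked
        if self.verify(password):
            self.failures = 0                 # a success resets the failure counter
            return True
        self.failures += 1
        if self.failures >= self.max_failures:
            self.locked_until = now + self.lockout_seconds
            self.failures = 0                 # start counting afresh after the lockout
        return False


fake_now = [0.0]
throttle = LoginThrottle(
    verify=lambda pw: pw == "correct horse",  # plaintext compare only for the demo
    max_failures=3,
    lockout_seconds=60.0,
    clock=lambda: fake_now[0],
)
for guess in ["a", "b", "c"]:
    throttle.attempt(guess)                   # the third failure starts a 60 s lockout
print(throttle.attempt("correct horse"))      # False: still locked out
fake_now[0] = 61.0
print(throttle.attempt("correct horse"))      # True: lockout has expired
```

A hard lockout like this one can itself be abused to lock a legitimate administrator out, which is why some systems prefer progressively increasing delays between attempts instead.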

Even more concerning was the firmware update process. The researchers report that the Halo would accept virtually any firmware payload as long as the update file was named correctly; nothing verified that the contents actually came from the manufacturer. That means an attacker could potentially install modified firmware and permanently change what the device does. To make matters worse, the firmware files were reportedly available as free downloads on the manufacturer's website, making it even easier for outsiders to analyze and potentially weaponize the update mechanism.
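To make the difference concrete, here is a hedged sketch contrasting a name-only check like the one reported with a check that also authenticates the payload. The expected file name `halo_fw.bin`, both functions, and the key are hypothetical. HMAC-SHA256 from Python's standard library stands in for the asymmetric signatures (such as Ed25519 or ECDSA) that firmware signing typically uses; a shared secret stored on a device without secure boot could itself be extracted, so treat this only as an illustration of the principle.

```python
import hashlib
import hmac

# Hypothetical file name; the Halo's real naming convention is not given here.
EXPECTED_NAME = "halo_fw.bin"


def naive_accept(filename: str, payload: bytes) -> bool:
    """Mimics the reported flaw: only the file name is checked."""
    return filename == EXPECTED_NAME


def verified_accept(filename: str, payload: bytes, tag: bytes, key: bytes) -> bool:
    """Checks the file name AND an authentication tag over the whole payload.

    HMAC-SHA256 is a stand-in for a real asymmetric signature scheme.
    """
    if filename != EXPECTED_NAME:
        return False
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    # Constant-time comparison avoids leaking how many bytes of the tag matched.
    return hmac.compare_digest(expected, tag)


VENDOR_KEY = b"hypothetical-vendor-key"
official = b"official firmware build"
tag = hmac.new(VENDOR_KEY, official, hashlib.sha256).digest()
tampered = official + b" plus backdoor"

print(naive_accept("halo_fw.bin", tampered))                      # True: name is all that matters
print(verified_accept("halo_fw.bin", official, tag, VENDOR_KEY))  # True
print(verified_accept("halo_fw.bin", tampered, tag, VENDOR_KEY))  # False: tag no longer matches
```

Because the tag covers every byte of the payload, renaming a modified image is no longer enough; an attacker would also need the signing key.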

By the end of their assessment, the researchers concluded they could alter the Halo to do essentially anything they wanted. While they didn’t find evidence that the device’s embedded microphones were being used for anything beyond the manufacturer’s stated purpose, the larger issue is capability and control. If security gaps allow a device to be repurposed, the difference between “what it’s designed to do” and “what it can be made to do” becomes a serious privacy concern.

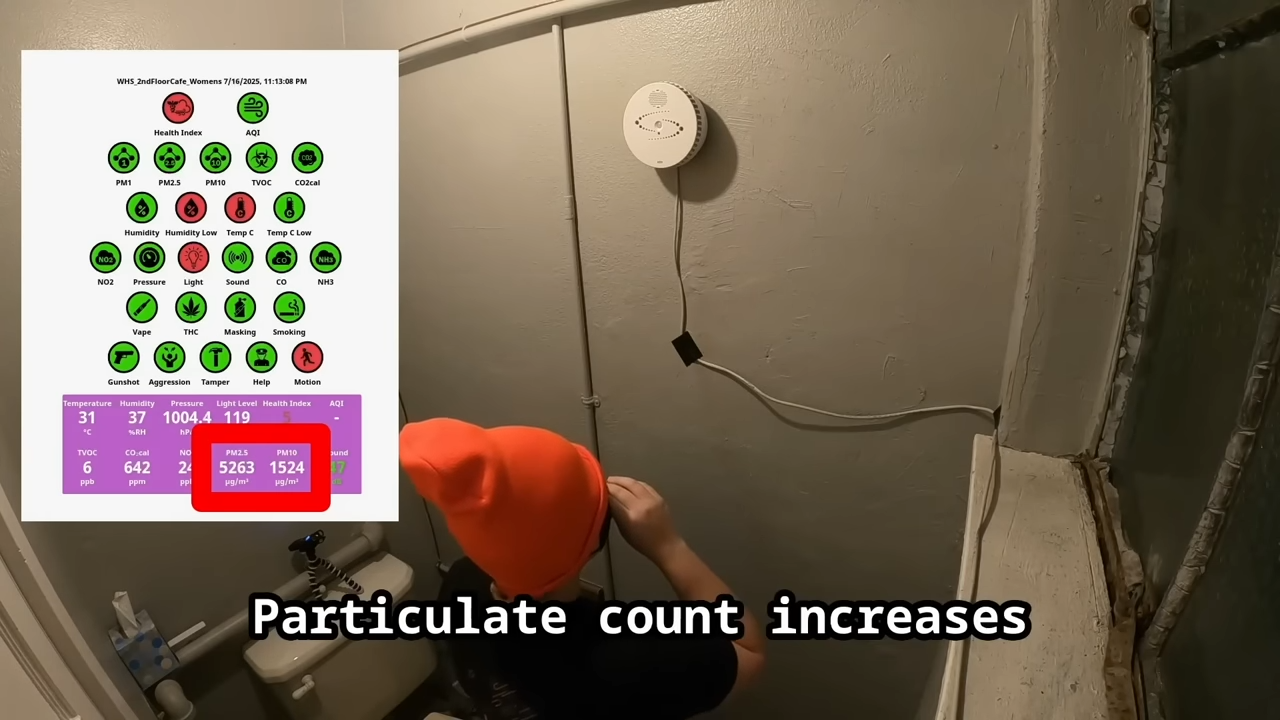

That concern grows when you consider where these detectors are already deployed. Reports indicate the Halo is present in places like retirement homes, schools, banks, and public housing projects—environments where people may have limited choice or awareness about what technology is installed around them. Adding to the unease, one public official reportedly described the device as an “expert witness” in prosecutions, intensifying fears that the technology could be used in ways that go beyond fire safety and into monitoring and enforcement.

The bigger picture is what this case suggests about the expanding Internet of Things footprint in public and semi-public spaces. A network of always-present connected devices, especially ones with microphones, can quickly become a hidden infrastructure that invites misuse. And when security is weak, the threats multiply: not only could hackers gain control, but the same openings could also make it easier for powerful parties to use the device in ways that residents, students, and the general public never agreed to.

As smart building safety systems become more common, this case highlights why basic protections like secure boot, strong authentication, and cryptographic firmware verification aren’t optional “nice-to-haves.” They are the minimum requirements for ensuring that devices intended to protect people can’t be quietly turned into tools for intrusion.