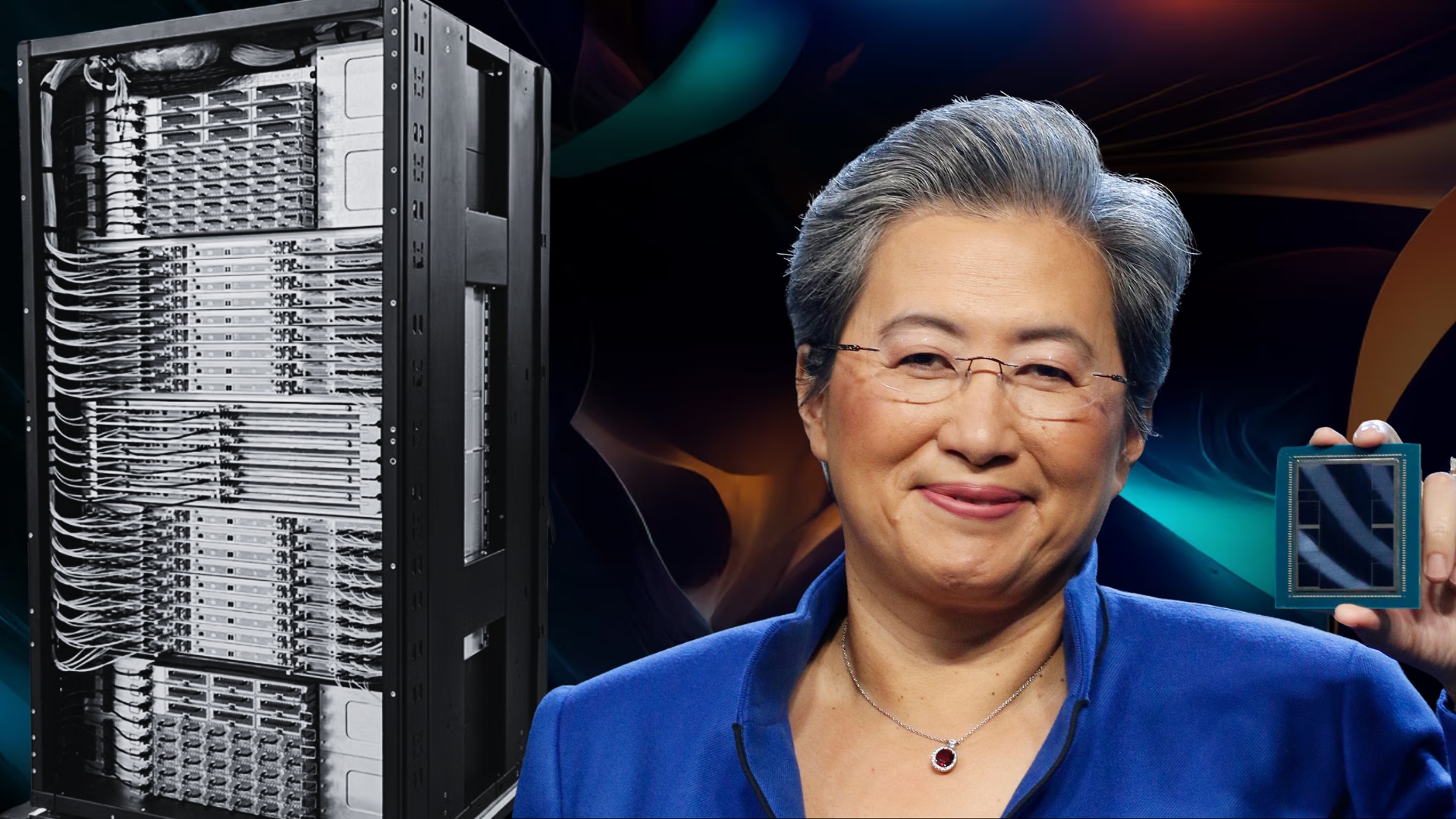

AMD is betting big on a surge in server CPU demand driven by the rapid rise of AI, especially a newer wave known as agentic AI. Speaking during the company’s fourth-quarter earnings call, AMD CEO Dr. Lisa Su laid out how AI is reshaping cloud computing needs, why CPUs are becoming more important alongside accelerators, and how this shift could change the balance of compute inside modern data centers.

Su said AI was the main growth engine for AMD during the quarter, noting that every major cloud provider expanded deployments of AMD EPYC server processors. These expanded rollouts are designed to handle a wide mix of AI-related tasks, from general-purpose compute and data processing to roles that support large accelerator deployments and newer agentic applications.

A key part of that infrastructure is what data centers often call “head node” functionality. In simple terms, even when GPUs and other accelerators do the heavy lifting for training and running large models, CPUs still play an essential management role—coordinating workloads, directing data movement, and keeping large compute clusters operating efficiently.

Agentic AI, in particular, is increasing the need for CPU horsepower. Unlike traditional AI workloads, which may rely mostly on accelerators once they are set up, agentic systems can generate many parallel tasks that must be orchestrated and executed across the system. Su explained that these workloads demand additional CPU processing for orchestration, data movement, and parallel execution, on top of CPUs serving as the host layer for GPUs and other accelerators. The result, she said, is stronger near-term CPU demand and deeper long-term capacity-planning conversations with customers.

That growing demand has led AMD to significantly raise its expectations for the server CPU market. Su pointed to a much steeper growth trajectory than previously outlined, with the server CPU total addressable market now projected to expand at roughly 35% per year to reach $120 billion by 2030. That’s a major jump from AMD’s earlier outlook, which suggested approximately 18% annual growth over the next three to five years.
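To make the compounding concrete, the Python sketch below back-calculates the starting market size implied by the $120 billion target, then grows that same starting point at the earlier 18% rate. The five-year horizon and the function names are assumptions for illustration only; the article does not state the base year or starting market size behind either figure.

```python
def implied_base(target: float, annual_growth: float, years: int) -> float:
    """Starting value that compounds to `target` after `years` at `annual_growth`."""
    return target / (1.0 + annual_growth) ** years


def compound(base: float, annual_growth: float, years: int) -> float:
    """Value of `base` after compounding at `annual_growth` for `years`."""
    return base * (1.0 + annual_growth) ** years


TARGET_2030_BILLION = 120.0  # figure quoted in the article
ASSUMED_YEARS = 5            # assumption: the article gives no base year

# Starting market size implied by ~35% annual growth over the assumed horizon.
base = implied_base(TARGET_2030_BILLION, 0.35, ASSUMED_YEARS)

# Where the same starting point would land at the earlier ~18% rate.
slower = compound(base, 0.18, ASSUMED_YEARS)

print(f"Implied starting market: ${base:.1f}B")
print(f"Same start at 18% for {ASSUMED_YEARS} years: ${slower:.1f}B")
```

Under that assumed horizon, the same starting point growing at 18% a year would end well short of $120 billion, which is the gap the revised forecast describes.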

One of the most important questions for investors and the industry is whether higher CPU demand will reduce spending on GPUs—or whether it will expand overall data center AI spending. Su’s view is clear: CPU growth is largely additive to the GPU market, not a replacement. In her assessment, accelerators remain essential for foundational models, while agent-based systems “spawn” many CPU-centric tasks that expand the need for server processors rather than shrinking it.

The conversation also highlighted a shift in the CPU-to-GPU ratio inside AI clusters. Historically, AI clusters were deployed with far fewer CPUs than GPUs, since the CPU mainly acted as a host, in configurations such as one CPU for every four or even eight GPUs. Su suggested that agentic AI could push that ratio closer to one-to-one and, in some scenarios, possibly beyond it if agent-based workloads scale dramatically and require many CPUs operating in parallel.
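As a rough illustration of what that ratio shift means for CPU counts, the Python sketch below works through the ratios mentioned above for a hypothetical 1,024-GPU cluster. The cluster size and the function name are assumptions, not figures from the call.

```python
import math


def cpus_needed(gpu_count: int, gpus_per_cpu: float) -> int:
    """CPUs required to serve `gpu_count` GPUs at a given GPUs-per-CPU ratio."""
    if gpus_per_cpu <= 0:
        raise ValueError("gpus_per_cpu must be positive")
    # Round up: a partially used CPU still has to be deployed.
    return math.ceil(gpu_count / gpus_per_cpu)


HYPOTHETICAL_GPUS = 1024  # assumption: illustrative cluster size

# Ratios described in the article: 1 CPU per 8 GPUs, per 4 GPUs, and one-to-one.
for gpus_per_cpu in (8, 4, 1):
    count = cpus_needed(HYPOTHETICAL_GPUS, gpus_per_cpu)
    print(f"1 CPU per {gpus_per_cpu} GPU(s): {count} CPUs")
```

For a fixed number of accelerators, moving from one CPU per eight GPUs to one-to-one multiplies the CPU count by eight.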

While the long-term endpoint is hard to predict, Su emphasized that the industry’s mindset is already changing. Data center planners are now thinking about CPUs and accelerators together as part of a unified AI deployment strategy, which she described as a positive development for AMD and for the broader server CPU market.

In short, AMD sees agentic AI as a meaningful catalyst that strengthens the role of server CPUs in AI infrastructure—supporting accelerators rather than competing with them—and potentially driving a new era of growth in cloud and data center compute.