New graphics cards are being tuned harder than ever for AI workloads, but a recent real-world test shows something unexpected: an 8-year-old NVIDIA Tesla V100 can still beat newer consumer GPUs in popular large language model (LLM) tasks—often with better efficiency too. And because used V100 cards have dropped dramatically in price, it raises an interesting question for anyone building a budget AI box: could yesterday’s data center hardware be today’s best value for running LLMs locally?

The Tesla V100 comes from NVIDIA’s Volta era, the company’s first GPU family built for the data center rather than for mainstream gaming PCs. Volta also introduced Tensor Cores, dedicated hardware units that accelerate the matrix math at the heart of AI workloads. Tensor Cores have improved a lot since then, but this test set out to see how the original Volta design holds up with modern LLM inference.

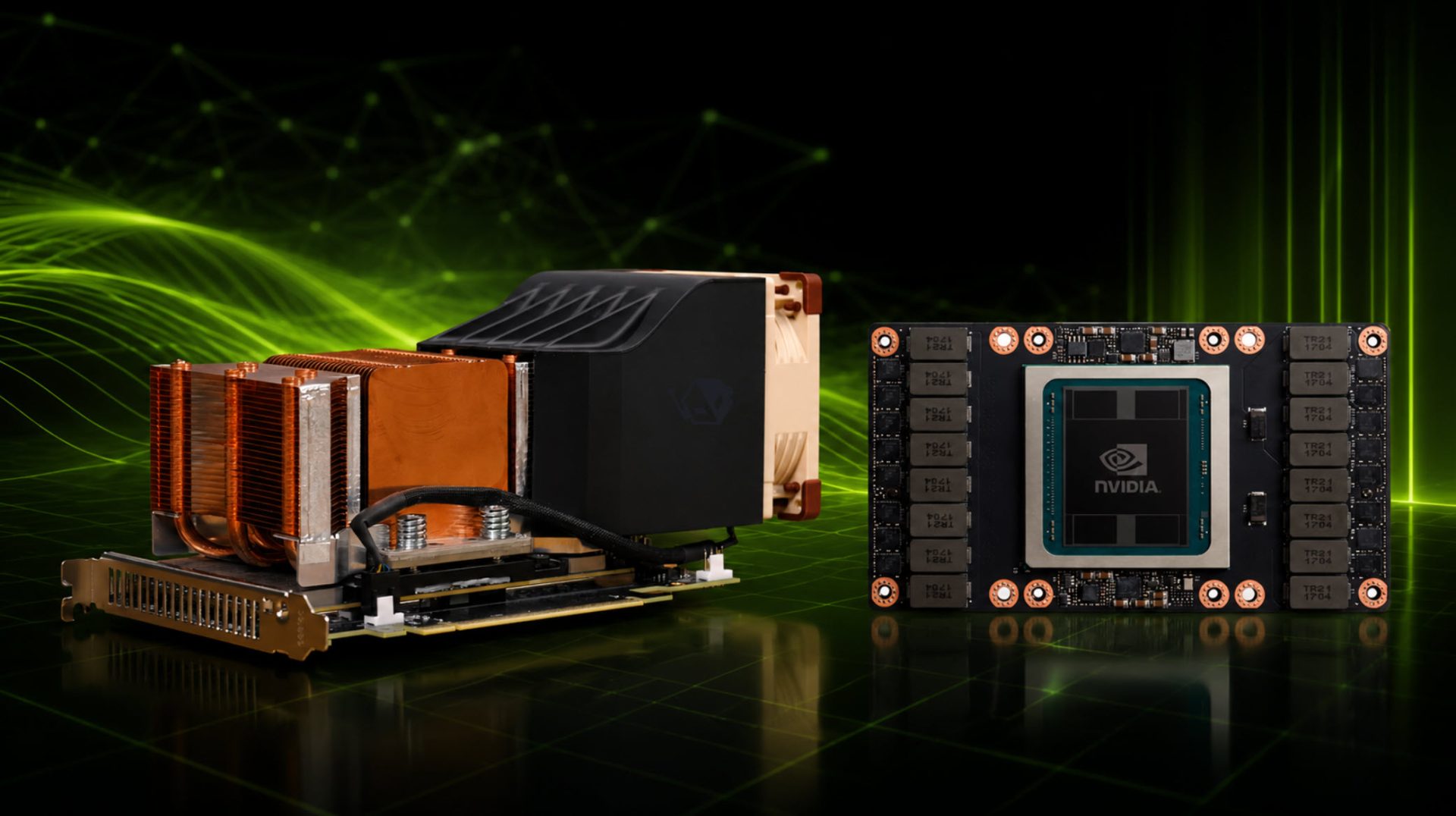

The particular card tested was a Tesla V100 SXM2 module rather than the more familiar PCIe add-in card. On paper, the specs are still impressive: 5120 CUDA cores, 640 Tensor Cores, 6 MB of L2 cache, boost clocks up to around 1530 MHz, and either 16 GB or 32 GB of HBM2 memory on a massive 4096-bit bus. That memory setup delivers roughly 898 GB/s of bandwidth, one of the reasons these older accelerators can still feel “fast” in AI tasks that lean heavily on memory throughput. Power is rated at 250 W for the PCIe card (NVIDIA’s spec for the SXM2 module is 300 W), which still looks modest compared with today’s extreme AI-focused hardware.
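To see where a bandwidth figure like this comes from, here is a minimal Python sketch of the usual relationship: peak bandwidth equals bus width times per-pin data rate, divided by 8 bits per byte. Only the 4096-bit bus and the 898 GB/s figure come from the article; the per-pin rate is back-calculated from them rather than taken from a datasheet, and the function names are illustrative.

```python
def bandwidth_gb_s(bus_width_bits: int, pin_rate_gbit_s: float) -> float:
    """Peak memory bandwidth in GB/s for a bus of the given width."""
    # Each pin carries pin_rate_gbit_s gigabits per second; 8 bits per byte.
    return bus_width_bits * pin_rate_gbit_s / 8


def implied_pin_rate_gbit_s(bus_width_bits: int, bandwidth: float) -> float:
    """Per-pin data rate (Gbit/s) implied by a quoted bandwidth in GB/s."""
    return bandwidth * 8 / bus_width_bits


BUS_WIDTH_BITS = 4096        # from the article
QUOTED_BANDWIDTH_GB_S = 898  # from the article (approximate)

pin_rate = implied_pin_rate_gbit_s(BUS_WIDTH_BITS, QUOTED_BANDWIDTH_GB_S)
print(f"Implied per-pin rate: {pin_rate:.2f} Gbit/s")
print(f"Round trip: {bandwidth_gb_s(BUS_WIDTH_BITS, pin_rate):.0f} GB/s")
```

The point of the exercise is that the very wide bus does most of the work: each pin only needs to run at under 2 Gbit/s to reach the quoted total.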

What really changes the conversation is the price. The V100 originally sold for well over $10,000, but the 16 GB version can now show up on the used market for roughly $100. At first glance, that seems like an unbeatable bargain for AI experimentation—until you run into the biggest catch: SXM2 compatibility.

SXM2 modules aren’t designed to drop into a normal desktop PC. To make the card work, the test setup required an SXM-to-PCIe adapter along with additional power connections and fan headers. Cooling is another hurdle. Tesla cards are typically built for server environments with high-pressure airflow and often rely on passive heatsinks rather than consumer-style fans. In a standard PC case, that means you’ll need a custom cooling approach. In this build, that meant a custom duct and a single high-quality fan aimed directly at the heatsink to keep temperatures under control.

Even with the adapter and cooling add-ons, the total spend landed a bit over $200—still cheaper than the mainstream GPUs used for comparison, including an RTX 3060 12 GB and an RX 7800 XT 16 GB.

Performance is where things got interesting. In testing with a GPT-style model of roughly 20 billion parameters, the V100 produced about 130 tokens per second, while the RX 7800 XT came in closer to 90 tokens per second, a lead of more than 40% for the V100. In another LLM scenario, the V100 also outpaced the RTX 3060 by a large margin, delivering roughly a 42% boost in token generation speed.
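The arithmetic behind that comparison can be sketched in a few lines of Python. The two throughput figures are the approximate ones quoted in the article; the 500-token reply length is a made-up example value, and the helper names are illustrative.

```python
def percent_faster(fast_tps: float, slow_tps: float) -> float:
    """How much faster the first rate is than the second, in percent."""
    return (fast_tps / slow_tps - 1) * 100


def seconds_for(tokens: int, tps: float) -> float:
    """Time to generate a given number of tokens at a steady rate."""
    return tokens / tps


V100_TPS = 130.0      # approximate figure from the article
RX7800XT_TPS = 90.0   # approximate figure from the article
REPLY_TOKENS = 500    # hypothetical reply length, for illustration only

lead = percent_faster(V100_TPS, RX7800XT_TPS)
print(f"V100 lead over RX 7800 XT: {lead:.0f}%")
print(f"V100 time for {REPLY_TOKENS} tokens: {seconds_for(REPLY_TOKENS, V100_TPS):.1f} s")
print(f"RX 7800 XT time for {REPLY_TOKENS} tokens: {seconds_for(REPLY_TOKENS, RX7800XT_TPS):.1f} s")
```

With the quoted rates, the lead works out to about 44%, which for a reply of that length is a difference of under two seconds.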

Efficiency, measured in tokens per second per watt, was just as surprising. Despite drawing more power in some runs, the older V100 still ended up about 12% ahead of the RTX 3060 in token generation efficiency. When capped at a 100 W power limit, the V100 pulled ahead again, showing about a 41% advantage in tokens per second per watt over the RTX 3060 in that constrained test.
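For readers who want to run the same kind of comparison on their own hardware, the metric is simple to compute: divide throughput by average power draw. The sketch below is only an illustration. The article does not publish the absolute throughput or wattage for these runs, so the numbers here are placeholders, not measurements, and the `Run` class is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class Run:
    name: str
    tokens_per_second: float
    avg_power_watts: float

    @property
    def tokens_per_joule(self) -> float:
        # Tokens per second per watt; since a watt is a joule per second,
        # this is the same as tokens generated per joule of energy.
        return self.tokens_per_second / self.avg_power_watts


def efficiency_advantage_percent(a: Run, b: Run) -> float:
    """How much more efficient run `a` is than run `b`, in percent."""
    return (a.tokens_per_joule / b.tokens_per_joule - 1) * 100


# Placeholder values for illustration only; NOT figures from the test.
card_a = Run("card A", tokens_per_second=60.0, avg_power_watts=120.0)
card_b = Run("card B", tokens_per_second=40.0, avg_power_watts=100.0)

advantage = efficiency_advantage_percent(card_a, card_b)
print(f"{card_a.name}: {card_a.tokens_per_joule:.2f} tokens/s/W")
print(f"{card_b.name}: {card_b.tokens_per_joule:.2f} tokens/s/W")
print(f"{card_a.name} advantage: {advantage:.0f}%")
```

In this made-up example, card A draws more power than card B yet still comes out ahead, because its throughput rises faster than its power draw. That is the same pattern the test describes for the V100.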

The takeaway is clear: older data center GPUs can still be very capable for local AI and LLM inference, sometimes outperforming newer consumer cards in both speed and efficiency. But it’s not a plug-and-play upgrade. You’re trading simplicity for value, because you may need adapters, extra power routing, and a custom cooling solution to make server hardware behave inside a desktop PC.

For users who want more headroom for larger models, the 32 GB V100 variant typically costs far more, often in the $400 to $500 range, but the extra VRAM can be a major advantage when loading bigger LLMs or running longer context windows.
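A rough way to judge whether 16 GB or 32 GB is enough is to estimate the size of the model weights from the parameter count and the quantization level. The Python sketch below does that under stated assumptions: it counts weights only, treats 1 GB as 10^9 bytes, and reserves an arbitrary 20% of VRAM for the KV cache, activations, and runtime overhead. Real usage depends on the runtime and context length, so treat this as a first-pass check, not a guarantee.

```python
def weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    # params_billion * 1e9 weights * bits / 8 bytes, divided by 1e9 bytes per GB
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, vram_gb: float,
         reserve_fraction: float = 0.2) -> bool:
    """True if the weights fit after reserving part of VRAM for everything else.

    reserve_fraction is an assumed allowance, not a measured value.
    """
    usable_gb = vram_gb * (1 - reserve_fraction)
    return weight_gb(params_billion, bits_per_weight) <= usable_gb


PARAMS_BILLION = 20  # the rough model size mentioned in the article

for bits in (4, 8, 16):
    size = weight_gb(PARAMS_BILLION, bits)
    print(f"{bits:>2}-bit weights: {size:.0f} GB | "
          f"fits 16 GB: {fits(PARAMS_BILLION, bits, 16)} | "
          f"fits 32 GB: {fits(PARAMS_BILLION, bits, 32)}")
```

Under these assumptions, a 20-billion-parameter model at 4 bits per weight fits on the 16 GB card, 8-bit weights need the 32 GB card, and 16-bit weights fit on neither.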

If you’re comfortable with a bit of hardware tinkering, a used Tesla V100 can be a surprisingly strong budget option for AI workloads in 2026. If you want something easy and standard, modern consumer GPUs will still be the smoother path—just not always the best performance-per-dollar for LLM inference.