Researchers at the University of Toronto have demonstrated a new attack that can severely degrade the accuracy of AI models running on high-end GPUs. Their method, called GPUHammer, adapts the RowHammer technique to GPU memory. It repeatedly accesses DRAM rows to induce single-bit flips in neighboring rows, and these flips can reduce a model's accuracy to below 1%.

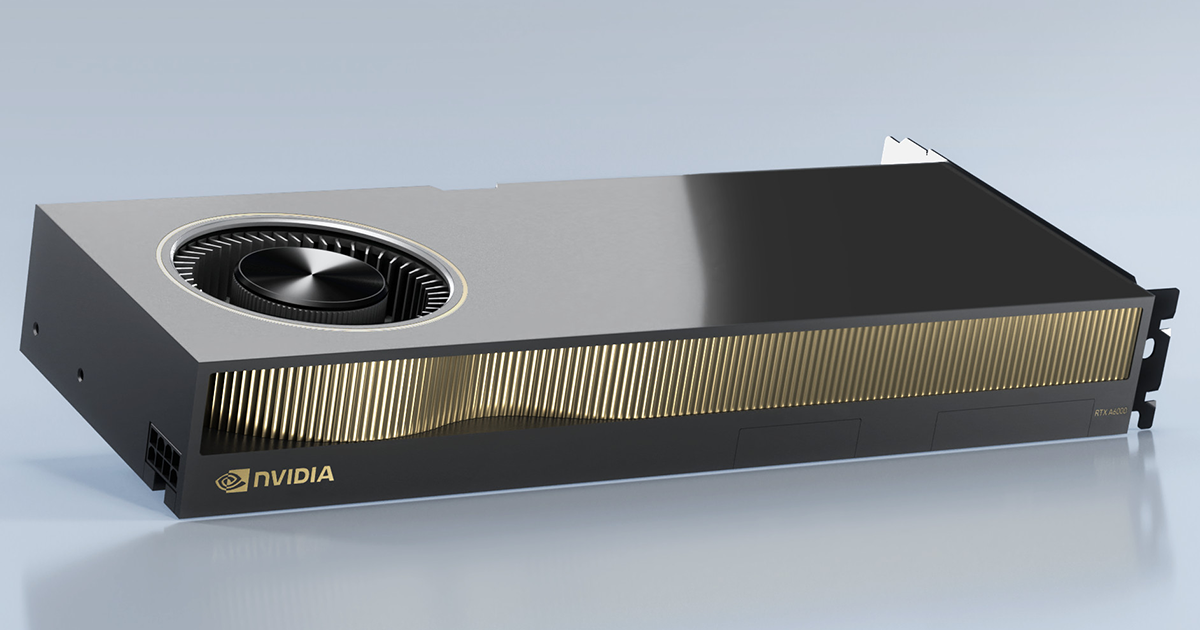

The researchers demonstrated the vulnerability on an NVIDIA RTX A6000 with GDDR6 VRAM. They showed that the induced bit flips could severely impair AI workloads on the GPU, even with existing in-DRAM defenses such as Target Row Refresh (TRR) in place. A single bit flip in an FP16 model weight was enough to drop a deep neural network's (DNN's) prediction accuracy from 80% to just 0.1% across several ImageNet models.
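To see why one flipped bit can be so damaging, recall the IEEE 754 half-precision (FP16) layout: 1 sign bit, 5 exponent bits and 10 mantissa bits. The minimal sketch below uses Python's standard `struct` module, whose `"e"` format packs half-precision floats, to flip individual bits of a weight. The weight value 0.5 and the choice of bit 14 (the most significant exponent bit) are illustrative assumptions, not details taken from the attack.

```python
import struct

def fp16_bits(x: float) -> int:
    """Return the 16-bit IEEE 754 half-precision encoding of x."""
    return struct.unpack("<H", struct.pack("<e", x))[0]

def bits_to_fp16(bits: int) -> float:
    """Decode a 16-bit pattern back into a float via half precision."""
    return struct.unpack("<e", struct.pack("<H", bits))[0]

def flip_bit(x: float, bit: int) -> float:
    """Flip one bit (0 = least significant) of x's FP16 encoding."""
    return bits_to_fp16(fp16_bits(x) ^ (1 << bit))

weight = 0.5  # hypothetical model weight
print(weight, "->", flip_bit(weight, 14))  # top exponent bit: 0.5 -> 32768.0
print(weight, "->", flip_bit(weight, 0))   # lowest mantissa bit: 0.5 -> 0.50048828125
```

Flipping a mantissa bit barely changes the value, while flipping the top exponent bit scales it by 2^16, and a weight that large can dominate every activation that depends on it.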

GPUHammer works in three key stages: reverse-engineering DRAM bank mappings, maximizing hammering efficiency, and synchronizing with DRAM refresh cycles. Together, these steps let the researchers trigger bit flips reliably in the RTX A6000's GDDR6 memory. Other GPUs with GDDR memory, such as the RTX 3080 (which uses GDDR6X), did not show the same bit flips, likely because of differences in the memory chips, which can come from different vendors.
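The first stage matters because hammering only works on rows that share a DRAM bank. Prior RowHammer work on CPUs commonly models the address-to-bank mapping as XOR (parity) functions over selected physical address bits. The sketch below assumes such a model. The masks in `BANK_MASKS` are made up for illustration and are not the RTX A6000's real mapping, which would have to be recovered experimentally. It shows how, once the functions are known, addresses that land in the same bank as a target can be picked out.

```python
# HYPOTHETICAL bank functions: bank-index bit i is the parity of the
# address bits selected by BANK_MASKS[i]. Real masks must be measured.
BANK_MASKS = [0x2040, 0x4080, 0x8100, 0x10200]

def parity(x: int) -> int:
    """Return 1 if x has an odd number of set bits, else 0."""
    return bin(x).count("1") & 1

def bank_of(addr: int) -> int:
    """Compute the bank index of an address under the assumed XOR model."""
    bank = 0
    for i, mask in enumerate(BANK_MASKS):
        bank |= parity(addr & mask) << i
    return bank

def same_bank(base: int, candidates: list[int]) -> list[int]:
    """Keep only the candidates that map to the same bank as base."""
    target = bank_of(base)
    return [a for a in candidates if bank_of(a) == target]

print(bank_of(0x40))                  # 1
print(same_bank(0, [0x40, 0x2040]))   # [8256], i.e. 0x2040
```

With XOR-based mappings, addresses far apart can share a bank (here `0x2040` pairs with `0`) while nearby ones may not, which is why the mapping has to be reverse-engineered rather than assumed to be a simple bit range.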

The attack did not affect the RTX 5090, which uses GDDR7, or data center GPUs such as the A100 and H100, which use High Bandwidth Memory (HBM). On the RTX A6000, the issue can be mitigated by enabling ECC (Error-Correcting Code), which can detect and correct single-bit flips. However, enabling ECC can slow ML inference by up to 10% and reduces usable VRAM capacity by 6.25%.

NVIDIA has responded with a security notice advising users to enable system-level ECC on affected GPUs. Fortunately, many newer GPUs, including those based on the Hopper and Blackwell architectures, have ECC enabled by default, which offers better protection against such attacks.