NVIDIA and AMD are dialing up the pressure in the race to build the best AI accelerators, with both companies reportedly revising their next‑generation designs to outmaneuver each other on performance, memory bandwidth, and power budgets.

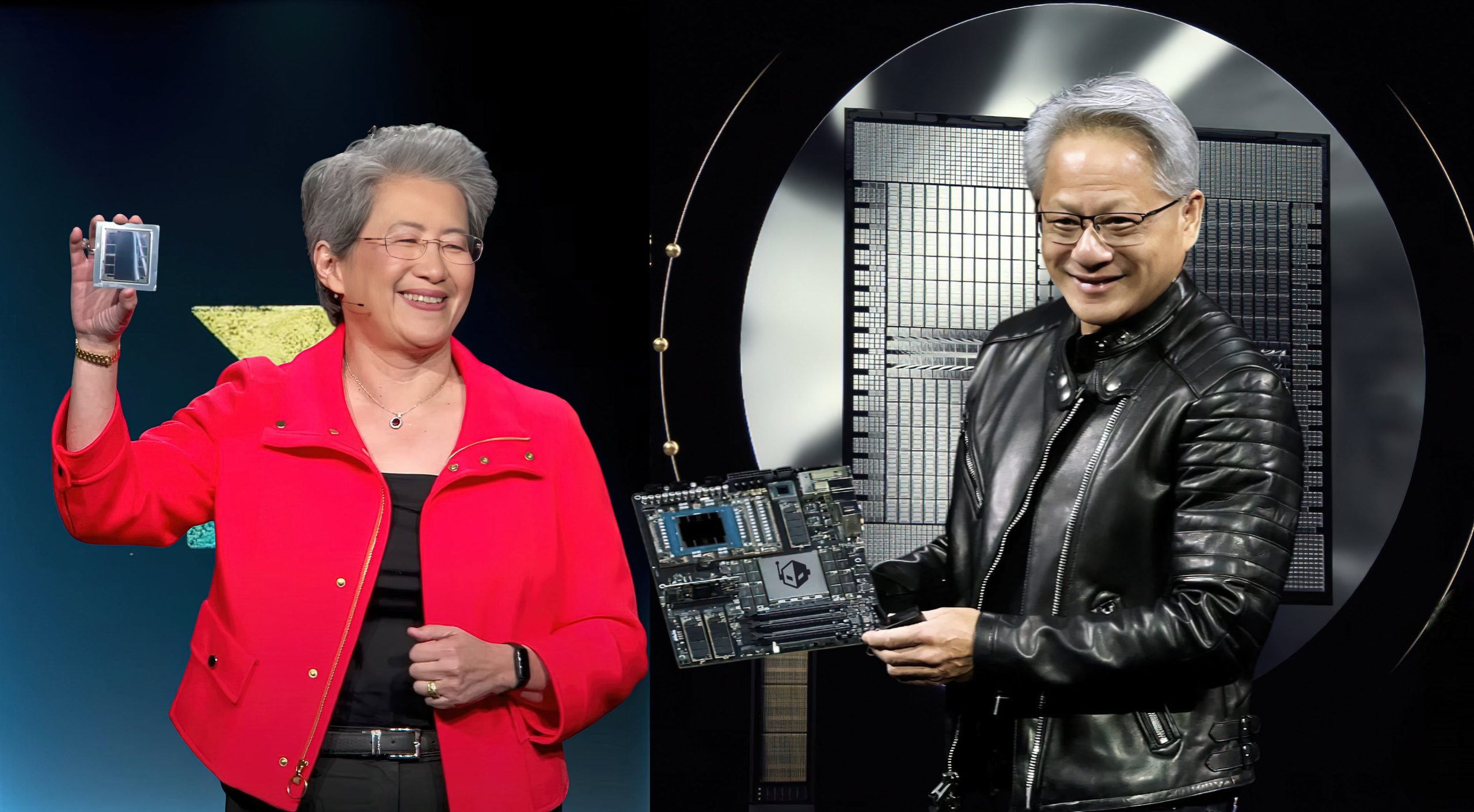

Industry chatter, including recent analysis from SemiAnalysis, points to an unusually close contest between AMD’s Instinct MI450 family and NVIDIA’s Vera Rubin (VR200) platform. AMD executives have publicly signaled strong confidence: Forrest Norrod framed MI450 as the company’s “Milan moment,” a reference to the EPYC 7003 (“Milan”) generation that lifted AMD’s server CPU fortunes. The message is clear: AMD expects MI450 to be a credible alternative to NVIDIA’s offering in the next cycle.

Behind the scenes, both roadmaps appear to have shifted. To stay competitive, AMD is said to have raised the MI450X total graphics power (TGP) from an earlier 2300W target to around 2500W. NVIDIA, in turn, reportedly raised Vera Rubin’s TGP from about 1800W to roughly 2300W. Memory bandwidth targets have climbed as well, with Rubin now rumored to aim for up to 20 TB/s per GPU, up from an earlier 13 TB/s estimate. None of these figures is confirmed, but revisions of this size underscore how fluid and fiercely competitive the high-end AI accelerator market has become.
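The size of these reported revisions is easier to compare as percentages. The snippet below is a minimal sketch that uses only the rumored before-and-after figures quoted above; the helper name `pct_change` and the dictionary labels are illustrative, not anything published by either vendor.

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


# Rumored before/after targets quoted in this article (not confirmed specs).
revisions = {
    "MI450X TGP (W)": (2300, 2500),
    "Vera Rubin TGP (W)": (1800, 2300),
    "Vera Rubin bandwidth (TB/s)": (13, 20),
}

for label, (old, new) in revisions.items():
    print(f"{label}: {old} -> {new} ({pct_change(old, new):+.1f}%)")
```

On these numbers, AMD's reported power increase is roughly 9%, while NVIDIA's reported changes are much larger: about 28% on power and about 54% on memory bandwidth.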

What’s fueling this escalation is a convergence of cutting‑edge technologies. Both camps are expected to leverage HBM4 memory, advanced packaging, and TSMC’s N3P process, along with increasingly modular, chiplet‑style designs. That overlap shrinks the technology gap and shifts the battleground to execution, efficiency, and ecosystem readiness.

Rumored specs at a glance

– AMD Instinct MI450 (MI400 family)

  – Target launch window: 2026

  – Memory: HBM4, up to 432 GB per GPU

  – Memory bandwidth: approximately 19.6 TB/s per GPU

  – Dense compute (FP4): around 40 PFLOPS

  – Power: MI450X reportedly targeting about 2500W TGP after revisions

– NVIDIA Vera Rubin VR200 (R200)

  – Target launch window: 2H 2026 for the NVL144 platform

  – Memory: HBM4, roughly 288 GB per GPU

  – Memory bandwidth: around 20 TB/s per GPU

  – Dense compute (FP4): about 50 PFLOPS

  – Power: reportedly revised to about 2300W TGP

If these numbers hold, the next cycle could be the closest head-to-head in years. On paper, NVIDIA’s Rubin leads on FP4 throughput (about 50 versus 40 PFLOPS) and holds only a marginal edge in memory bandwidth (around 20 versus 19.6 TB/s), while AMD’s MI450 counters with 50% more HBM capacity per GPU (432 GB versus 288 GB) at a higher power target (about 2500W versus 2300W). Both strategies aim at large-scale training and high-throughput inference for generative AI and other foundation-model workloads, where memory bandwidth, interconnect efficiency, and software maturity all matter.
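The rumored per-GPU figures can also be turned into rough efficiency ratios. The following is a back-of-the-envelope sketch, not a benchmark: it assumes the listed values are directly comparable peak numbers, and the `RumoredSpec` class and its method names are hypothetical helpers introduced here for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RumoredSpec:
    """Rumored per-GPU figures from this article (unconfirmed)."""
    name: str
    hbm_gb: float
    bandwidth_tbs: float
    fp4_pflops: float
    tgp_watts: float

    def pflops_per_kw(self) -> float:
        # Peak dense FP4 throughput per kilowatt of TGP.
        return self.fp4_pflops / (self.tgp_watts / 1000)

    def bytes_per_flop(self) -> float:
        # Memory bytes available per FP4 operation at peak rates.
        return (self.bandwidth_tbs * 1e12) / (self.fp4_pflops * 1e15)

    def hbm_gb_per_kw(self) -> float:
        # HBM capacity per kilowatt of TGP.
        return self.hbm_gb / (self.tgp_watts / 1000)


MI450X = RumoredSpec("AMD Instinct MI450X", 432, 19.6, 40, 2500)
VR200 = RumoredSpec("NVIDIA Vera Rubin VR200", 288, 20, 50, 2300)

for spec in (MI450X, VR200):
    print(
        f"{spec.name}: "
        f"{spec.pflops_per_kw():.1f} PFLOPS/kW, "
        f"{spec.bytes_per_flop():.5f} B/FLOP, "
        f"{spec.hbm_gb_per_kw():.1f} GB/kW"
    )
```

Under these assumptions, Rubin would come out ahead on peak FP4 throughput per kilowatt (about 21.7 versus 16 PFLOPS/kW), while MI450X would offer more memory bandwidth per FLOP and more HBM capacity per kilowatt. Real-world results depend on sustained rather than peak rates, so these ratios are only a starting point for comparison.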

There are practical implications as well. Pushing TGPs past 2 kW per GPU will demand denser liquid-cooling deployments, upgraded power delivery, and careful data center planning. HBM4 supply and packaging yields may become a gating factor for volume. And while hardware leadership is critical, end-to-end platform readiness (compilers, frameworks, libraries, and developer tooling) will continue to influence real-world performance and adoption.

Official, final specifications are still under wraps, but early signals suggest a generational leap in compute and memory performance from both vendors. AMD’s tone indicates confidence that MI450 will see strong uptake, while NVIDIA’s Rubin is already drawing interest from major AI players, signaling that deployments could ramp quickly once platforms are ready.

Bottom line: the AI accelerator arms race is intensifying. With HBM4, advanced nodes, and aggressive power and bandwidth targets, AMD Instinct MI450 and NVIDIA Vera Rubin are shaping up to deliver unprecedented performance for next‑gen AI workloads. For buyers, the next 12–18 months will be about tracking firmed‑up specs, thermal and power requirements, and ecosystem maturity to match the right platform with the right workload at scale.