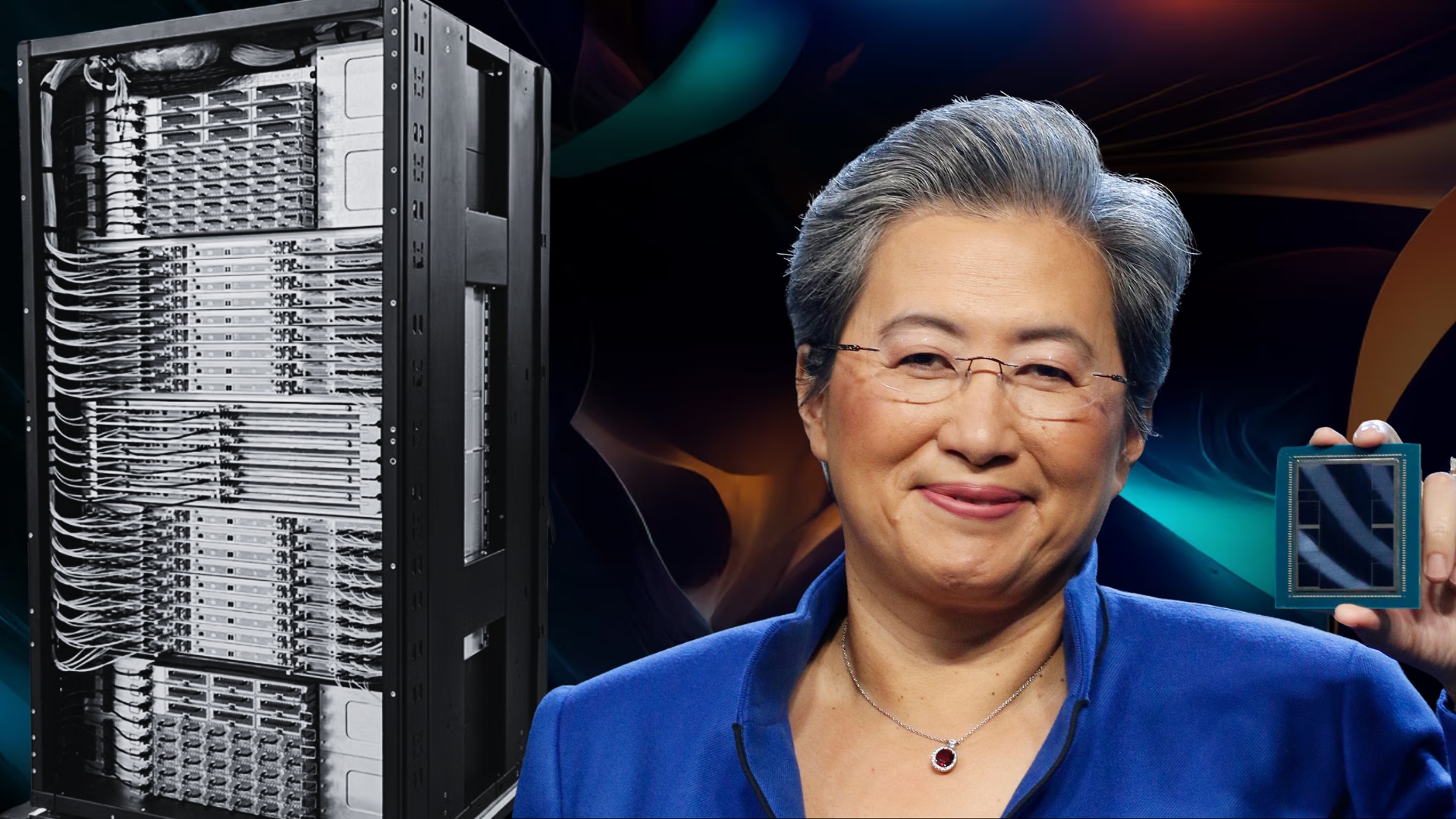

AMD is turning up the heat in the AI accelerator race as it begins customer sampling of its Instinct MI450 GPUs, a key step that often signals real-world deployments aren't far behind. On the company's Q1 2026 earnings call, AMD CEO Dr. Lisa Su said that MI450 sampling is already underway with "lead" customers and that the company remains on schedule to ramp Helios rack production shipments in the second half of 2026.

What's driving the buzz is not just that the hardware is nearing rollout, but that demand is reportedly building faster than AMD expected. Su said customer forecasts for MI450 now exceed AMD's initial plans, with a growing list of new engagements focused on large-scale deployments, including additional multi-gigawatt opportunities. In other words, the MI450 isn't simply attracting interest; it's lining up serious capacity commitments.

Major AI organizations are already tied to upcoming MI450 deployments. OpenAI and Meta have signed multi-gigawatt agreements, and Anthropic is widely expected to use the MI400 family to help power its AI compute infrastructure. AMD also emphasized that it’s working closely with customers through “deep co-engineering,” which typically means hardware, software, and systems are being tuned together to hit performance and efficiency targets at data center scale.

A notable theme from AMD’s comments is where the biggest near-term volume is expected to land: AI inference. While MI450 is being positioned for both AI training and inference, Su indicated that the largest deployments are expected on the inference side as “agentic AI” workloads continue to expand. That matters because inference demand tends to grow rapidly once AI services move from development into production, where latency, cost-per-query, and throughput become everything.

On the technology front, the MI450 lineup is expected to build on AMD's CDNA 5 architecture and bring several upgrades that speak directly to modern AI infrastructure needs. The highlights include increased HBM4 memory capacity and bandwidth, expanded AI data formats with higher throughput, and standards-based rack-scale networking such as UALink over Ethernet (UALoE), UALink (UAL), and Ultra Ethernet (UEC).

AMD's own performance positioning also suggests a major step forward. The company's metrics peg the MI400 generation at up to 40 PFLOPS (FP4) and 20 PFLOPS (FP8), roughly doubling compute capability versus the MI350 series that's already popular in AI data centers. Memory is an even bigger story: AMD is moving to HBM4, increasing capacity from 288GB of HBM3e to 432GB of HBM4, a 50% uplift. Bandwidth is projected to jump to 19.6 TB/s, about 2.45x the 8 TB/s cited for MI350. AMD also cites 300 GB/s of scale-out bandwidth per GPU, pointing to stronger multi-node performance for large inference clusters and distributed training jobs.
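The generational ratios above are easy to check. The short Python sketch below simply divides AMD's quoted MI450 figures by the MI350 figures cited in this article; the dictionary keys and the `uplift` helper are illustrative names, not part of any AMD tool or API.

```python
# Generational comparison using only the figures quoted in this article
# (AMD's own metrics). Key names and the helper are illustrative.
mi350 = {"hbm_capacity_gb": 288, "hbm_bandwidth_tbs": 8.0}
mi450 = {"hbm_capacity_gb": 432, "hbm_bandwidth_tbs": 19.6}


def uplift(old: float, new: float) -> float:
    """Return the ratio new/old (1.5 means a 50% increase)."""
    return new / old


capacity_ratio = uplift(mi350["hbm_capacity_gb"], mi450["hbm_capacity_gb"])
bandwidth_ratio = uplift(mi350["hbm_bandwidth_tbs"], mi450["hbm_bandwidth_tbs"])

# Capacity: 432 / 288 = 1.5, i.e. a 50% uplift.
print(f"HBM capacity:  {capacity_ratio:.2f}x ({(capacity_ratio - 1) * 100:.0f}% more)")
# Bandwidth: 19.6 / 8 = 2.45, i.e. "more than double".
print(f"HBM bandwidth: {bandwidth_ratio:.2f}x")
```

Running it prints a 1.50x (50%) capacity gain and a 2.45x bandwidth gain, consistent with the article's "50% uplift" and "more than double" wording.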

AMD is clearly aiming to be viewed as a direct alternative to NVIDIA at the top end, positioning MI450 against NVIDIA’s Vera Rubin generation with claims such as 1.5x memory capacity, comparable memory bandwidth, comparable FP4/FP8 throughput, comparable scale-up bandwidth, and 1.5x scale-out bandwidth.
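Because AMD states its Vera Rubin comparison as multipliers, the competitor figures those claims imply can be back-calculated from the MI450 numbers above. This is a rough sketch that inverts AMD's stated 1.5x ratios. The results are what AMD's claims imply, not NVIDIA-published specifications, and the variable names are hypothetical.

```python
# Back-calculate the Vera Rubin figures implied by AMD's claimed ratios.
# These are NOT NVIDIA-published specs; they only invert AMD's multipliers.
mi450_memory_gb = 432       # HBM4 capacity per GPU (AMD figure)
mi450_scale_out_gbs = 300   # scale-out bandwidth per GPU (AMD figure)

claimed_memory_ratio = 1.5     # AMD claim: "1.5x memory capacity"
claimed_scale_out_ratio = 1.5  # AMD claim: "1.5x scale-out bandwidth"

implied_rubin_memory_gb = mi450_memory_gb / claimed_memory_ratio
implied_rubin_scale_out_gbs = mi450_scale_out_gbs / claimed_scale_out_ratio

print(f"Implied Vera Rubin memory:    {implied_rubin_memory_gb:.0f} GB")
print(f"Implied Vera Rubin scale-out: {implied_rubin_scale_out_gbs:.0f} GB/s per GPU")
```

Under those assumptions, AMD's claims correspond to roughly 288 GB of memory and 200 GB/s of scale-out bandwidth per Vera Rubin GPU.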

The broader MI400 family is also expected to include more than one configuration, tailored to different data center needs. One model, Instinct MI455X, is geared toward large-scale AI training and inference. Another, MI430X, targets HPC and "sovereign AI" use cases, adding hardware-based FP64 capabilities, a focus on hybrid CPU+GPU compute, and the same HBM4 memory approach as MI455X.

Looking beyond MI450, AMD also signaled that customer conversations are already extending into the next wave. Su noted strong traction across the roadmap: she mentioned MI355, called out MI450 and Helios for large-scale deployments, and added that many customers are actively engaged around the MI500 series as well. That early pull from customers is important, because it suggests planning cycles for future AI data centers are already factoring in AMD's next-generation accelerators.

AMD plans to share more about its next-gen Instinct GPUs, EPYC processors, and the Helios RackScale platform at its Advancing AI event in July. With MI450 sampling now in motion and production shipments slated for the second half of 2026, AMD's AI data center push appears to be accelerating, especially in inference, where the company expects the largest real-world deployments as AI agents and production AI services continue scaling up.