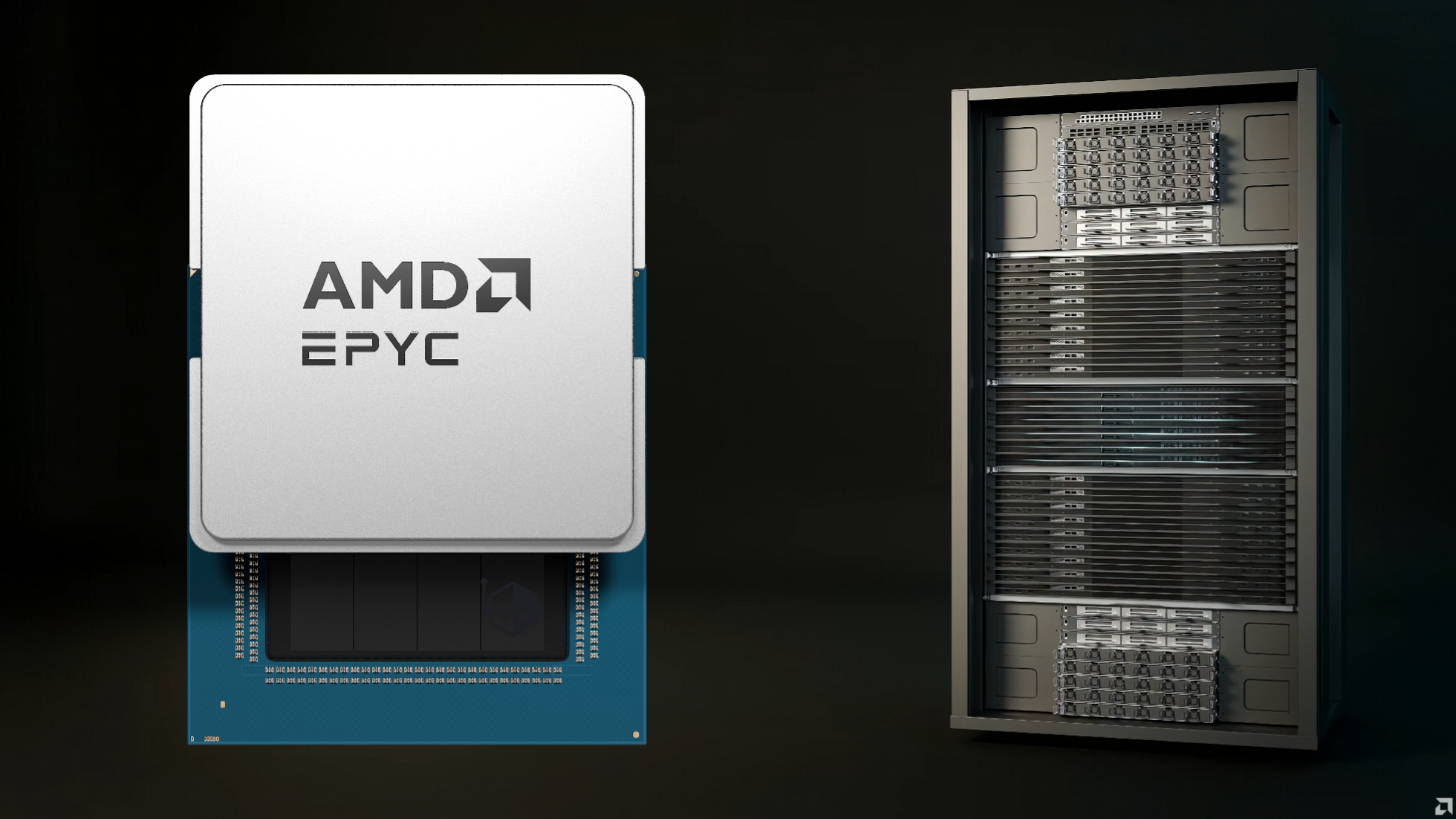

AMD is stepping into the 2nm era in a big way, giving the public an early look at what it says are the world’s first 2nm data center chips: the next-generation EPYC Venice CPU based on the Zen 6 family, and the Instinct MI455X accelerator. Both parts are designed to serve as the computing foundation for AMD’s upcoming Helios AI Rack, a platform aimed squarely at high-performance, large-scale AI training and inference.

The Helios AI Rack itself is built as a fully liquid-cooled system, reflecting how quickly power density and thermal demands are rising in modern AI infrastructure. In its showcased configuration, each compute tray in the rack pairs a single EPYC Venice “Zen 6” CPU with four Instinct MI455X GPUs. For data movement, networking, and interconnect duties, the design also integrates AMD’s Pensando Salina 400 DPU alongside the Pensando Vulcano 800 AI NIC, highlighting a growing emphasis on offloading and high-throughput connectivity in AI clusters.

On the CPU side, AMD’s EPYC Venice is positioned as a major leap for server computing. The company notes that each Zen 6-based CPU can scale up to 256 cores using the Zen 6C approach, targeting dense, efficient throughput for data center workloads. Helios is also presented as a platform that can scale to huge overall system resources, with AMD citing up to 4,600 CPU cores and 18,000 GPU cores within a rack-scale configuration, depending on how the platform is deployed.
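
As a rough sanity check on those figures, the sketch below works out how many 256-core CPUs the rack-level core count implies. It assumes the 4,600 figure is a rounded total, that every CPU is the full 256-core part, and that the one-CPU-to-four-GPU ratio of the showcased configuration holds across the rack. None of these is confirmed in AMD's statements quoted here, and the variable names are illustrative only.

```python
# Back-of-envelope check: how many CPUs does the quoted rack core count imply?
# All constants come from figures quoted in the article; treating them as
# exact inputs is an assumption made for illustration.

CORES_PER_CPU = 256      # per-CPU maximum quoted for Zen 6C
RACK_CPU_CORES = 4_600   # rack-level figure, presumably rounded
GPUS_PER_CPU = 4         # ratio from the showcased configuration


def implied_cpu_count(rack_cores: int, cores_per_cpu: int) -> int:
    """Return the nearest whole number of CPUs matching a rack core total."""
    return round(rack_cores / cores_per_cpu)


cpus = implied_cpu_count(RACK_CPU_CORES, CORES_PER_CPU)
exact_cores = cpus * CORES_PER_CPU
gpus = cpus * GPUS_PER_CPU

print(f"Implied CPUs per rack: {cpus}")
print(f"Exact core count at that CPU count: {exact_cores}")
print(f"Implied GPUs per rack at 1:4 ratio: {gpus}")
```

Under these assumptions the numbers line up: 18 CPUs give 4,608 cores, which rounds to the quoted 4,600, and 18 CPUs at four GPUs each give 72 GPUs, matching the 72-GPU rack configuration AMD lists in its broader lineup.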

The GPU side is equally ambitious. The Instinct MI455X is described as packing several thousand compute units, built to push AI compute capabilities to a new tier. AMD claims the Helios rack scales up to 2.9 exaflops of AI compute, paired with up to 31 TB of HBM4 memory and as much as 43 TB/s of scale-out bandwidth. These are key metrics for keeping large AI models fed with data and minimizing bottlenecks across multi-GPU, multi-node deployments.
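
To put the rack-level totals in per-accelerator terms, the following sketch divides them evenly across the GPUs. It assumes a 72-GPU rack (the count AMD cites for its hyperscale rack configuration), decimal units (1 TB = 1,000 GB), and an even split of every resource. The article does not state the numeric precision behind the 2.9 exaflops figure, so the per-GPU compute number inherits that ambiguity.

```python
# Rough per-GPU shares of the quoted Helios rack totals.
# GPUS_PER_RACK is an assumption taken from the 72-GPU rack configuration
# mentioned in the article; an even split across GPUs is also assumed.

GPUS_PER_RACK = 72
RACK_AI_EXAFLOPS = 2.9      # "up to" figure, precision not stated
RACK_HBM4_TB = 31           # "up to" figure
RACK_SCALE_OUT_TBPS = 43    # "as much as" figure


def per_gpu(total: float, gpu_count: int) -> float:
    """Divide a rack-level total evenly across the GPUs in the rack."""
    return total / gpu_count


hbm_gb_per_gpu = per_gpu(RACK_HBM4_TB * 1000, GPUS_PER_RACK)
pflops_per_gpu = per_gpu(RACK_AI_EXAFLOPS * 1000, GPUS_PER_RACK)
scale_out_gbps_per_gpu = per_gpu(RACK_SCALE_OUT_TBPS * 1000, GPUS_PER_RACK)

print(f"HBM4 per GPU:      {hbm_gb_per_gpu:.0f} GB")
print(f"AI compute per GPU: {pflops_per_gpu:.1f} PFLOPS")
print(f"Scale-out per GPU:  {scale_out_gbps_per_gpu:.0f} GB/s")
```

With these inputs, each GPU's share comes to about 431 GB of HBM4, about 40 petaflops of AI compute, and just under 600 GB/s of scale-out bandwidth. These are derived estimates, not AMD specifications.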

AMD also showcased the physical design philosophy behind these parts. The MI455X, in particular, is described as a towering multi-die package featuring two large graphics compute dies (GCDs), two memory controller dies (MCDs), and a total of 16 HBM4 memory sites. It’s the kind of “bigger than big” silicon package that underscores how advanced packaging and chiplet strategies are now central to AI hardware progress.

The EPYC Venice chiplet layout was also highlighted, with AMD pointing to a design that includes eight Zen 6C CCDs (core complex dies) and two I/O dies, plus additional smaller chiplets that handle management controller duties. This type of modular approach is becoming increasingly important as manufacturers balance performance scaling, yields, power efficiency, and platform flexibility at cutting-edge process nodes like 2nm.

Beyond Helios, AMD says it’s preparing a broader data center portfolio built around EPYC Venice processors, Instinct MI400-series accelerators, and the Vulcano interconnect ecosystem. The planned lineup includes a 72-GPU rack approach for hyperscale AI deployments, an 8-GPU enterprise AI configuration using Instinct MI440X accelerators, and a hybrid compute offering that pairs EPYC Venice-X CPUs featuring updated 3D V-Cache with MI430X GPUs optimized for FP64 performance. That last option is an indicator that AMD is targeting not only AI, but also traditional HPC and mixed workloads that still depend heavily on double-precision compute.

With these first looks at 2nm EPYC Venice “Zen 6” and Instinct MI455X, AMD is signaling that its next wave of data center platforms will focus on rack-scale AI compute, high-bandwidth memory expansion through HBM4, and faster, smarter networking and data movement. For enterprises and hyperscalers searching for more performance per watt, higher density, and smoother scaling across massive GPU counts, Helios and the chips behind it are shaping up to be a major milestone in AMD’s roadmap.