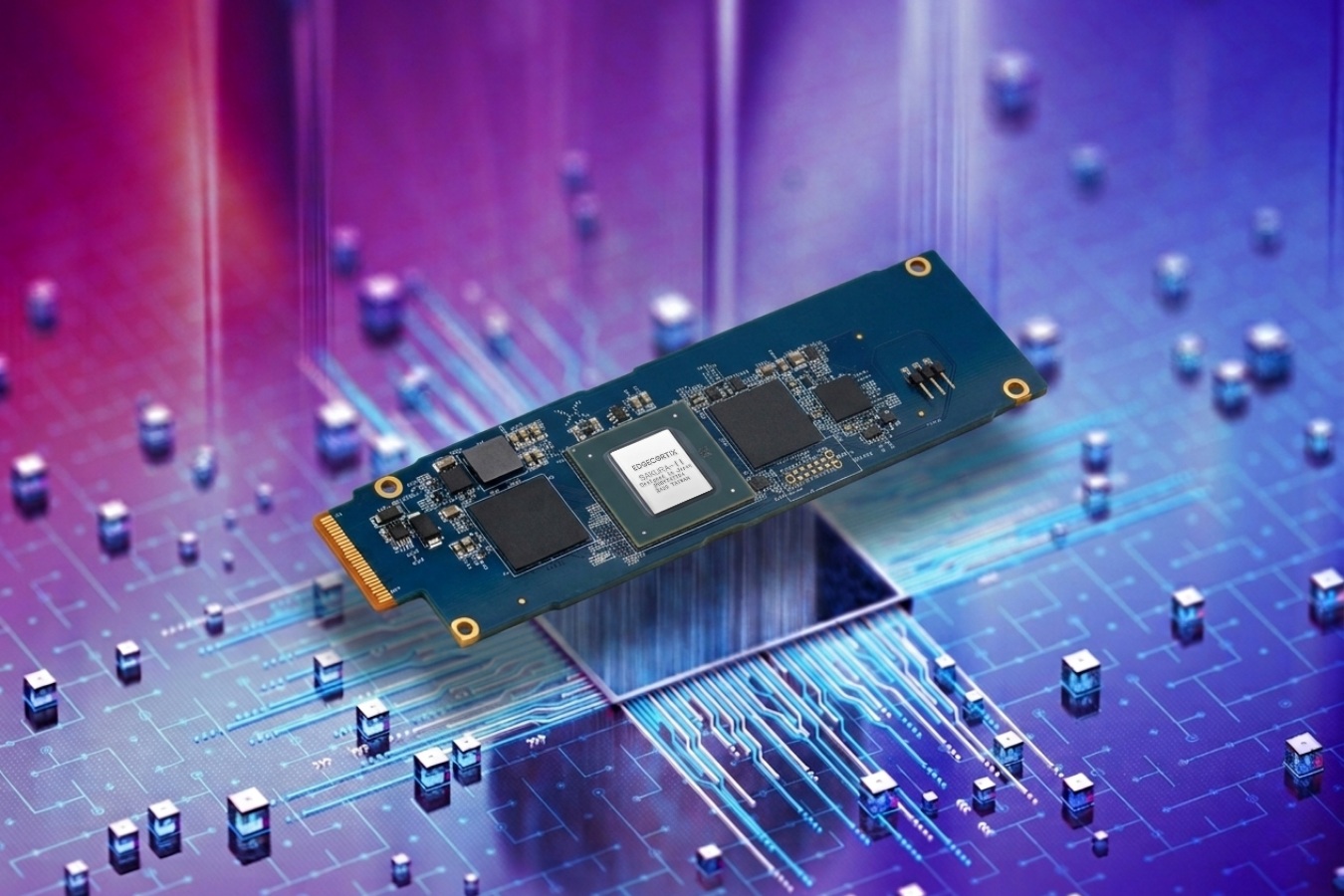

Unigen is jumping into the fast-growing world of local, on-device AI with a compact accelerator that fits somewhere most people wouldn't expect: a standard SSD-style slot. The company has introduced the Amaretti E1.S AI module, a small add-in card designed for E1.S and M.2-compatible slots that can turn unused expansion slots in desktops, laptops, and servers into dedicated AI compute.

At the core of the Amaretti module is the SAKURA-II AI accelerator from EdgeCortix, a chip built specifically for low-power AI deployments. Despite its small footprint, the accelerator is rated for up to 60 TOPS of INT8 performance and up to 30 TFLOPS of BF16 compute. It also includes a dual 64-bit LPDDR4x memory controller and 20MB of on-chip SRAM cache. Power draw is reported at roughly 8–10W, making it a practical option for always-on AI tasks without the heat and power requirements typically associated with larger accelerators.

Unigen’s approach is straightforward but compelling: place the SAKURA-II accelerator on an E1.S board and pair it with substantial onboard memory. The Amaretti E1.S AI module comes in 16GB and 32GB versions, with memory bandwidth of up to 68GB/s. At 60 TOPS and a 10W rating, the module works out to about 6 TOPS per watt, positioning it as an efficiency-focused accelerator for real-world inference and edge workloads.
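The headline numbers can be sanity-checked with a few lines of arithmetic. The sketch below divides rated INT8 throughput by board power, and estimates peak bandwidth from the dual 64-bit (128-bit total) LPDDR4x interface. The 4266 MT/s transfer rate is an assumption (a common LPDDR4x speed grade), not a figure Unigen has published; it simply shows how a number close to 68GB/s can arise.

```python
# Back-of-the-envelope checks for the Amaretti E1.S figures quoted above.
# ASSUMPTION: the LPDDR4x runs at 4266 MT/s (a common LPDDR4x speed grade);
# the article does not state the transfer rate.

PEAK_INT8_TOPS = 60.0      # SAKURA-II rated INT8 throughput
BOARD_POWER_W = 10.0       # upper end of the quoted 8-10 W range
BUS_WIDTH_BITS = 2 * 64    # dual 64-bit LPDDR4x controller
ASSUMED_MT_PER_S = 4266    # hypothetical transfer rate, see note above


def tops_per_watt(tops: float, watts: float) -> float:
    """Peak efficiency: rated operations per second divided by power."""
    return tops / watts


def peak_bandwidth_gb_s(bus_bits: int, mega_transfers: float) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = bus_bits / 8
    return bytes_per_transfer * mega_transfers * 1e6 / 1e9


if __name__ == "__main__":
    print(f"{tops_per_watt(PEAK_INT8_TOPS, BOARD_POWER_W):.1f} TOPS/W")
    print(f"{peak_bandwidth_gb_s(BUS_WIDTH_BITS, ASSUMED_MT_PER_S):.1f} GB/s")
```

Run as a script, this prints 6.0 TOPS/W and 68.3 GB/s. At the lower 8W end of the quoted range, the same division gives 7.5 TOPS/W, so the 6 TOPS per watt figure is the conservative case.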

The 32GB configuration is what makes this module especially interesting for local generative AI. Unigen says the memory capacity allows it to run large language models of up to 20B parameters, a meaningful range for on-device assistants, private AI workflows, and agentic AI tasks where latency, cost, or data privacy make cloud processing less appealing. Another benefit is scalability: the modules can be installed in multiple slots, so systems with several M.2 or E1.S connections can stack AI capability without moving to bulky, power-hungry hardware.
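A rough way to see why 32GB is the threshold for 20B-parameter models is to estimate weight storage at different precisions. The sketch below counts weights only, ignoring the KV cache, activations, and runtime overhead, so real requirements will be higher. The precision choices are illustrative assumptions; the article does not say which precision Unigen used for its 20B claim. The `aggregate` helper is likewise hypothetical and only sums raw per-module figures; whether a single model can be split across several modules depends on the software stack, which the article does not address.

```python
# Rough weight-only memory estimate for running an LLM from on-module DRAM.
# ASSUMPTIONS: weights dominate memory use; KV cache, activations and runtime
# overhead are ignored. Precisions are illustrative, not Unigen's stated setup.

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(params_billions: float, precision: str) -> float:
    """Approximate weight storage in GB (decimal) for a given precision."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits(params_billions: float, precision: str, capacity_gb: float) -> bool:
    """True if the weights alone fit within the module's DRAM capacity."""
    return weight_footprint_gb(params_billions, precision) <= capacity_gb


def aggregate(modules: int, per_module_tops: float = 60.0,
              per_module_gb: float = 32.0) -> tuple[float, float]:
    """Hypothetical helper: raw summed INT8 TOPS and DRAM across modules."""
    return modules * per_module_tops, modules * per_module_gb


if __name__ == "__main__":
    for precision in ("bf16", "int8", "int4"):
        need = weight_footprint_gb(20, precision)
        print(f"20B @ {precision}: ~{need:.0f} GB, "
              f"fits 32 GB: {fits(20, precision, 32)}, "
              f"fits 16 GB: {fits(20, precision, 16)}")
    print("4 modules (TOPS, GB):", aggregate(4))
```

By this estimate, a 20B model needs about 40GB at BF16 (too large for one module), about 20GB at INT8 (fits the 32GB version but not the 16GB one), and about 10GB at 4-bit. That suggests the 20B figure assumes 8-bit or lower weight precision, though the article does not confirm this.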

Compatibility and software support are also critical for adoption, and Unigen notes that the module works with many widely used AI frameworks and ecosystems, including TensorFlow, PyTorch, ONNX, and Hugging Face. That should make it easier for developers and organizations to integrate the module into existing inference pipelines rather than building a custom stack from scratch.

Unigen is also highlighting broader deployment advantages for server environments, including claims of high inference scalability in air-cooled dual-CPU servers, lower power usage than some alternative approaches, and lead times that may be shorter than what buyers often face when sourcing high-demand GPU server hardware. Each module ships with a pre-installed heatsink to simplify installation and cooling.

Pricing hasn’t been shared yet, but given the combination of specialized acceleration hardware and up to 32GB of onboard memory, it’s reasonable to expect the Amaretti E1.S AI module to target professional users, labs, integrators, and businesses building efficient local AI systems. For anyone with spare M.2 slots who wants to bring AI workloads closer to the device, this SSD-sized NPU module adds a new and surprisingly practical option to the growing on-device AI hardware market.