AI’s insatiable appetite for compute is pushing the limits of Earth-bound infrastructure, and the next frontier may literally be off-planet. Following public interest from tech leaders such as Jeff Bezos and Eric Schmidt, Elon Musk has confirmed that SpaceX is actively exploring orbital data centers, with the company’s next-generation Starlink V3 satellites serving as the core platform.

Responding on social media, Musk said SpaceX “will be doing this,” pointing to a path in which Starlink’s laser-linked satellite network evolves from delivering global internet access to powering cloud computing in space. The concept taps into a compelling advantage: abundant solar energy in orbit, without the land use, water consumption, and local environmental impacts that come with traditional data centers on Earth.

Skeptics, of course, see major hurdles ahead. Building and maintaining large-scale compute platforms in space raises tough questions about cost, reliability, power management, thermal control, radiation hardening, and long-term servicing. Still, SpaceX’s track record makes it hard to dismiss the idea outright. Starlink itself was widely doubted early on; today it connects millions of users worldwide.

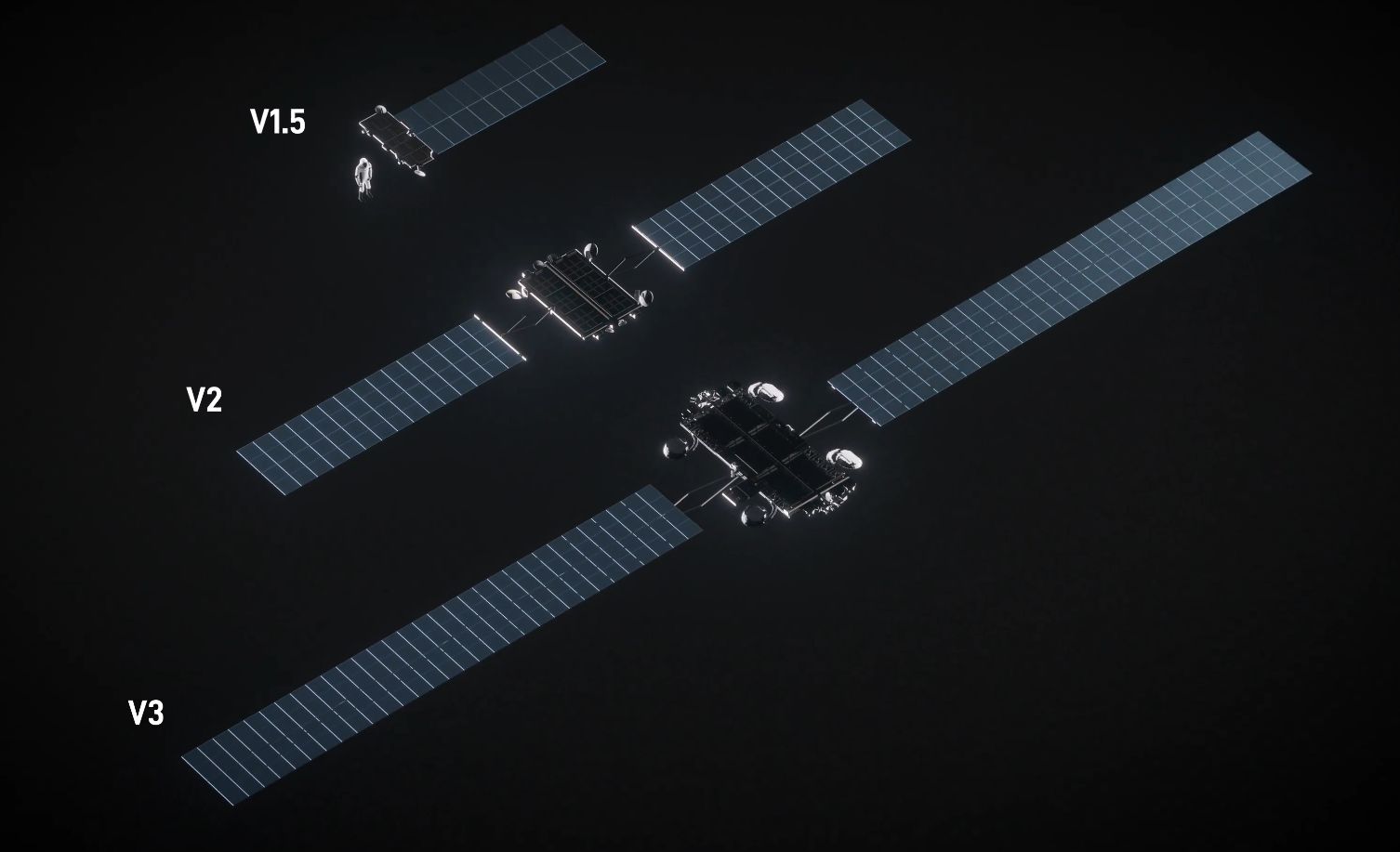

The hardware foundation for this vision is the Starlink V3 satellite. While the current V2 Mini generation tops out at around 100 Gbps per satellite, V3 is expected to reach roughly 1 Tbps, thanks in part to high-speed optical inter-satellite links. That would put a single V3 satellite in the same raw-throughput class as Viasat’s Boeing-built ViaSat-3, a geostationary system that took many years and major investment to bring to life. SpaceX aims to mass-deploy V3 hardware at a cadence no one else can match, targeting as many as 60 high-capacity satellites per Starship launch as soon as 2026.

That scale is what could make orbital cloud viable. According to industry analysts such as Caleb Henry at Quilty Space, no other satellite program currently comes close to the aggregate capacity SpaceX could loft in a single flight. If even a fraction of that bandwidth were dedicated to compute-related backhaul and interconnects, it could lay the groundwork for a distributed, low-latency orbital network capable of handling AI workloads, data preprocessing, content delivery, and edge inference near where data is generated.
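To put those figures in perspective, here is a back-of-envelope calculation using only the numbers quoted in this article: roughly 100 Gbps per V2 Mini, roughly 1 Tbps per V3, and up to 60 V3 satellites per Starship launch. These are reported targets, not confirmed specifications, and the helper `aggregate_tbps` is purely illustrative. The result is nameplate capacity, an upper bound: at any moment much of a constellation passes over oceans or sparsely populated regions, so usable throughput is lower.

```python
# Back-of-envelope capacity arithmetic using the figures quoted in the article.
# All three constants are reported targets, not confirmed specifications.
V2_MINI_GBPS = 100        # approximate per-satellite throughput, V2 Mini
V3_GBPS = 1_000           # roughly 1 Tbps per V3 satellite (expected)
V3_PER_STARSHIP = 60      # up to 60 V3 satellites per Starship launch (target)


def aggregate_tbps(per_satellite_gbps: float, satellites: int) -> float:
    """Total nameplate throughput of a batch of satellites, in Tbps."""
    return per_satellite_gbps * satellites / 1_000  # 1 Tbps = 1,000 Gbps


per_launch = aggregate_tbps(V3_GBPS, V3_PER_STARSHIP)
uplift = V3_GBPS / V2_MINI_GBPS

print(f"Per-satellite uplift, V2 Mini -> V3: {uplift:.0f}x")
print(f"Nameplate capacity added per Starship launch: {per_launch:.0f} Tbps")
```

On these assumptions, a single full Starship launch would add about 60 Tbps of nameplate capacity, with each satellite carrying roughly ten times what a V2 Mini does.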

The promise is enticing:

– Abundant solar power above the atmosphere, free from clouds and weather

– Global coverage using laser-linked satellites for high-speed data transfer

– Reduced land, water, and permitting constraints compared to terrestrial hyperscale facilities

– Potential resilience for critical services via off-world redundancy
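The solar-power advantage comes with a caveat worth quantifying: for much of the year, a satellite in low Earth orbit passes through Earth’s shadow on every revolution, so its power system needs batteries sized to ride through eclipse. The sketch below estimates the worst-case eclipse for a circular orbit using a simple cylindrical-shadow model with the Sun in the orbital plane. The 550 km altitude is an assumption, chosen because it is typical of several Starlink shells, and the function names are illustrative.

```python
import math

EARTH_RADIUS_KM = 6371.0          # mean Earth radius
MU_EARTH_KM3_S2 = 398_600.4418    # Earth's standard gravitational parameter


def eclipse_fraction(altitude_km: float) -> float:
    """Worst-case fraction of a circular orbit spent in Earth's shadow.

    Assumes a cylindrical shadow and a beta angle of zero (Sun in the
    orbital plane), which gives the longest eclipse for a given altitude.
    """
    r = EARTH_RADIUS_KM + altitude_km
    # In shadow while the satellite is behind Earth and within one Earth
    # radius of the Sun-Earth line: half-angle = asin(R / r).
    return math.asin(EARTH_RADIUS_KM / r) / math.pi


def orbital_period_min(altitude_km: float) -> float:
    """Period of a circular orbit from Kepler's third law, in minutes."""
    r = EARTH_RADIUS_KM + altitude_km
    return 2 * math.pi * math.sqrt(r**3 / MU_EARTH_KM3_S2) / 60


alt = 550.0  # hypothetical altitude for illustration
frac = eclipse_fraction(alt)
period = orbital_period_min(alt)
print(f"Orbital period: {period:.1f} min")
print(f"Worst-case eclipse: {frac:.1%} of each orbit ({frac * period:.1f} min)")
```

At this altitude the model puts the worst-case eclipse at a little over a third of each roughly 95-minute orbit. Dawn-dusk sun-synchronous orbits, which follow the day-night boundary, can avoid most eclipses, which is one reason orbit choice matters for a power-hungry payload.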

But challenges remain significant:

– Launch costs and on-orbit assembly for large compute payloads

– Heat dissipation and thermal management in vacuum

– Radiation tolerance for servers and storage

– Debris mitigation, servicing, and lifecycle management

– Regulatory and data sovereignty considerations

– End-to-end latency for interactive workloads
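The thermal challenge is the least intuitive of these. In vacuum there is no air or water to carry heat away, so every watt a server draws must eventually be radiated into space, following the Stefan-Boltzmann law P = εσAT⁴. The sketch below inverts that law to estimate radiator area for a hypothetical 1 MW compute payload. The emissivity of 0.9, the 300 K radiator temperature, and the single radiating face are all assumptions, and ignoring absorbed sunlight and Earth’s infrared glow makes the estimate optimistic.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w: float, temp_k: float, emissivity: float = 0.9) -> float:
    """Idealized radiator area needed to reject heat_w watts to deep space.

    Assumes one radiating face at a uniform temperature, a 0 K sink, and no
    absorbed sunlight or Earth infrared, so a real radiator would need to be
    larger than this estimate.
    """
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * temp_k**4
    return heat_w / flux_w_per_m2


# Hypothetical 1 MW compute payload with radiators at 300 K (about 27 degrees C).
area = radiator_area_m2(1_000_000, temp_k=300.0)
print(f"Radiator area for 1 MW at 300 K: {area:,.0f} m^2")
```

Under these assumptions the answer is on the order of 2,400 m² of radiator per megawatt. Because radiated power scales with T⁴, running radiators hotter shrinks them quickly, but that pushes against the temperatures chips can tolerate.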

In the near term, an orbital data center may begin as a specialized extension of Earth-based cloud rather than a wholesale replacement—handling tasks like caching, content distribution, AI preprocessing, and space-to-space data routing, with heavy training jobs and sensitive datasets remaining on the ground. Over time, as Starship flight rates increase and satellite capacity scales, the line between terrestrial and orbital compute could blur.

SpaceX has repeatedly used manufacturing scale, rapid iteration, and vertical integration to shift what’s possible in space infrastructure. If the company applies the same model to orbital computing—leveraging terabit-class Starlink V3 satellites, optical mesh networking, and bulk deployment via Starship—the leap from concept to capability may arrive faster than expected.

For the broader tech ecosystem, the implications are enormous. Cloud providers, AI labs, and telecom operators are all racing to expand capacity while managing costs and environmental impact. Orbital data centers won’t solve every problem, but they could become a powerful new tier in the global compute stack—one born above the atmosphere and designed for an AI-first era.