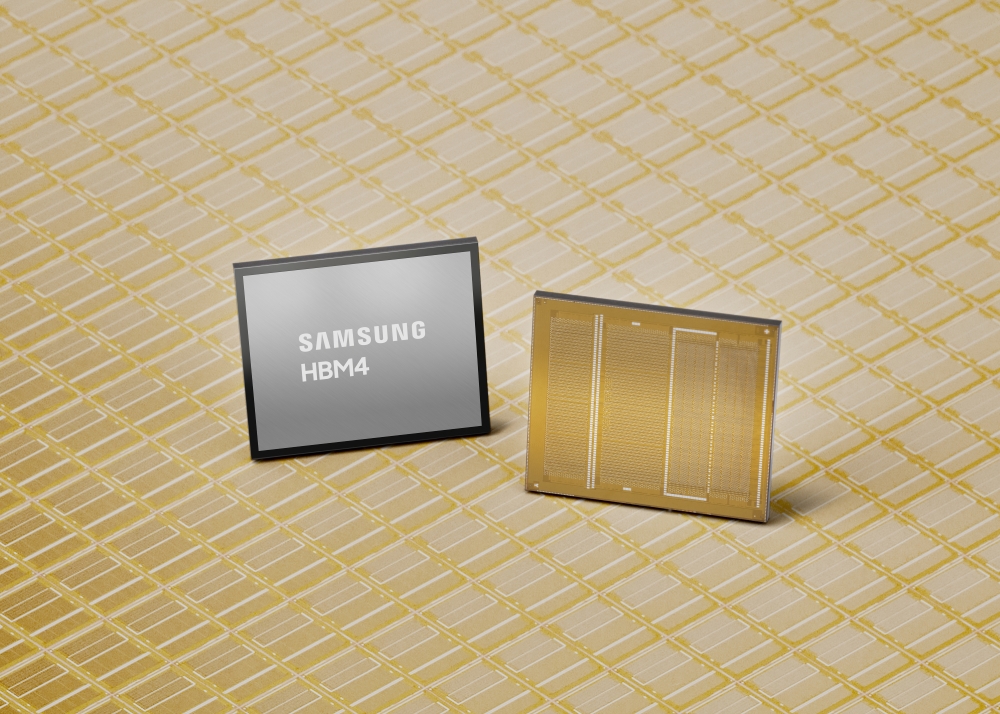

Samsung has officially kicked off mass production and customer shipments of its next-generation HBM4 (High Bandwidth Memory), signaling a major step forward for AI servers, data centers, and high-end GPU workloads that demand extreme memory speed and bandwidth. With this launch, Samsung says it’s taking an early lead in the HBM4 race at a time when demand is accelerating across AI training, inference, and hyperscale computing.

At the core of this rollout is Samsung’s latest 6th-generation 10nm-class DRAM process, referred to as 1c. The company says this process helped it reach stable yields and strong performance right from the start of mass production, without requiring additional redesign work—an important detail for customers that need predictable supply and consistent specifications at scale.

Faster HBM4 speeds to reduce AI bottlenecks

Samsung’s HBM4 is designed to push significantly higher data rates than current mainstream offerings. The company lists a consistent per-pin speed of 11.7Gbps, which it positions well above the 8Gbps industry baseline. That is also a noticeable step up from the prior HBM3E generation, which topped out at 9.6Gbps per pin. For customers looking to squeeze out even more performance, Samsung says HBM4 can be tuned up to 13Gbps, aiming to relieve the memory bottlenecks that grow as AI models become larger and more complex.
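The speed comparisons above come down to simple ratios. The short Python sketch below computes the percentage uplift of each HBM4 speed over the reference points the article cites. The constant names are illustrative, and the figures are the quoted per-pin data rates in Gbps, not measured results.

```python
# Per-pin data rates quoted in the article, in Gbps.
INDUSTRY_BASELINE = 8.0  # baseline Samsung compares against
HBM3E_MAX = 9.6          # prior-generation top speed
HBM4_RATED = 11.7        # Samsung's stated HBM4 speed
HBM4_TUNED = 13.0        # optional tuned ceiling


def uplift_pct(new: float, old: float) -> float:
    """Percentage increase of `new` over `old`."""
    return (new / old - 1.0) * 100.0


comparisons = {
    "HBM4 rated vs. baseline": uplift_pct(HBM4_RATED, INDUSTRY_BASELINE),
    "HBM4 rated vs. HBM3E": uplift_pct(HBM4_RATED, HBM3E_MAX),
    "HBM4 tuned vs. HBM3E": uplift_pct(HBM4_TUNED, HBM3E_MAX),
}

for label, pct in comparisons.items():
    print(f"{label}: +{pct:.1f}%")
```

Run as-is, it reports uplifts of roughly 46% (11.7Gbps vs. the 8Gbps baseline), 22% (11.7Gbps vs. HBM3E's 9.6Gbps), and 35% (13Gbps vs. 9.6Gbps).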

Bandwidth is also getting a big lift. Samsung states that total memory bandwidth per stack reaches a maximum of 3.3TB/s, a 2.7x increase over HBM3E. In practical terms, higher bandwidth per stack can help GPUs and AI accelerators stay fed with data, improving throughput and reducing idle compute time, especially in bandwidth-heavy AI and HPC workloads.
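As a rough check on these figures, peak per-stack bandwidth can be estimated as the number of data I/O pins times the per-pin rate, divided by 8 bits per byte. The sketch below is a simplified model, not Samsung's methodology: it assumes the 1,024-pin (HBM3E) and 2,048-pin (HBM4) interface widths mentioned later in the article, decimal units, and no protocol overhead.

```python
def stack_bandwidth_tbps(io_pins: int, gbps_per_pin: float) -> float:
    """Peak per-stack bandwidth in TB/s (decimal): pins x Gbps / 8 bits per byte / 1000."""
    return io_pins * gbps_per_pin / 8 / 1000


# Interface widths and per-pin speeds taken from the article; overhead ignored.
hbm3e = stack_bandwidth_tbps(1024, 9.6)
hbm4_rated = stack_bandwidth_tbps(2048, 11.7)
hbm4_tuned = stack_bandwidth_tbps(2048, 13.0)

print(f"HBM3E @ 9.6Gbps:  {hbm3e:.2f} TB/s")
print(f"HBM4  @ 11.7Gbps: {hbm4_rated:.2f} TB/s")
print(f"HBM4  @ 13Gbps:   {hbm4_tuned:.2f} TB/s")
print(f"Tuned HBM4 vs. HBM3E: {hbm4_tuned / hbm3e:.1f}x")
```

Under this model, HBM3E at 9.6Gbps lands near 1.23TB/s and HBM4 at its rated 11.7Gbps near 3.0TB/s. The quoted 3.3TB/s and 2.7x figures line up with the 13Gbps tuned speed (about 3.33TB/s, or roughly 2.7 times HBM3E), which suggests the headline bandwidth assumes that higher setting.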

Capacity scales from 24GB to 48GB per stack

Capacity options are another headline feature. Using 12-layer stacking, Samsung plans to offer HBM4 in 24GB to 36GB configurations. Looking ahead, 16-layer stacking is expected to expand the lineup up to 48GB per stack, giving system designers more flexibility to hit higher total memory capacities without increasing the number of stacks. That can be an advantage in dense accelerator deployments where space, routing, and power budgets are tight.
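The capacity figures also imply a per-die density. The sketch below divides each stack capacity by its layer count, assuming every layer is an identical core DRAM die (the base logic die is not counted), and then estimates how many stacks a hypothetical 288GB accelerator would need at each capacity. The 288GB target and the function names are illustrative assumptions, not figures from Samsung.

```python
import math

# Stack configurations quoted in the article: (label, DRAM layers, capacity in GB).
CONFIGS = [
    ("12-layer, 24GB", 12, 24),
    ("12-layer, 36GB", 12, 36),
    ("16-layer, 48GB", 16, 48),
]

HYPOTHETICAL_TARGET_GB = 288  # illustrative accelerator memory target, not from Samsung


def per_die_gbit(layers: int, stack_gb: int) -> float:
    """Capacity of each DRAM die in gigabits, assuming identical core dies."""
    return stack_gb * 8 / layers


def stacks_needed(target_gb: int, stack_gb: int) -> int:
    """Minimum number of stacks whose combined capacity reaches target_gb."""
    return math.ceil(target_gb / stack_gb)


for label, layers, stack_gb in CONFIGS:
    print(
        f"{label}: {per_die_gbit(layers, stack_gb):.0f}Gb per die, "
        f"{stacks_needed(HYPOTHETICAL_TARGET_GB, stack_gb)} stacks for "
        f"{HYPOTHETICAL_TARGET_GB}GB"
    )
```

Under these assumptions, the 24GB and 36GB 12-layer parts work out to 16Gb and 24Gb dies, the 48GB 16-layer part also uses 24Gb dies, and reaching 288GB takes 12, 8, or 6 stacks respectively.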

Power efficiency and thermals improved for next-gen accelerators

Higher performance in HBM typically brings tougher power and heat challenges, and Samsung points to one key reason: the number of data I/Os doubles from 1,024 to 2,048 pins. To address this, the company says it integrated low-power design solutions into the core die and improved efficiency through low-voltage TSV (through-silicon via) technology alongside PDN (power distribution network) optimization.

Samsung claims HBM4 delivers a 40% improvement in power efficiency compared to HBM3E, along with a 10% improvement in thermal resistance and 30% better heat dissipation. These improvements matter in real deployments because they can help data centers manage power draw, cooling requirements, and reliability, which are often major factors in total cost of ownership.
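Percentage claims like these are easier to interpret as energy per bit. The sketch below assumes, as an interpretation rather than Samsung's stated definition, that a 40% power-efficiency gain means 40% more bits transferred per joule than HBM3E. It also reuses a simple pins-times-speed bandwidth model (1,024 pins at 9.6Gbps versus 2,048 pins at 11.7Gbps) to estimate relative stack power when HBM4 runs at full rated bandwidth.

```python
# Assumption: "power efficiency" = bits transferred per joule (not Samsung's stated definition).
EFFICIENCY_GAIN = 0.40  # claimed HBM4 improvement over HBM3E

# Simple peak-bandwidth model: data I/O pins x per-pin Gbps (only the ratio is used).
HBM3E_BANDWIDTH = 1024 * 9.6
HBM4_RATED_BANDWIDTH = 2048 * 11.7
BANDWIDTH_RATIO = HBM4_RATED_BANDWIDTH / HBM3E_BANDWIDTH  # about 2.44x


def relative_energy_per_bit(efficiency_gain: float) -> float:
    """Energy per bit relative to the baseline when bits-per-joule rises by efficiency_gain."""
    return 1.0 / (1.0 + efficiency_gain)


def relative_power(bandwidth_ratio: float, efficiency_gain: float) -> float:
    """Power relative to the baseline when moving bandwidth_ratio times as many bits per second."""
    return bandwidth_ratio * relative_energy_per_bit(efficiency_gain)


energy = relative_energy_per_bit(EFFICIENCY_GAIN)
power = relative_power(BANDWIDTH_RATIO, EFFICIENCY_GAIN)
print(f"Energy per bit: {energy:.2f}x HBM3E ({(1 - energy) * 100:.0f}% lower)")
print(f"Power at full rated bandwidth: {power:.2f}x HBM3E")
```

Under these assumptions, energy per bit falls to about 0.71x HBM3E (roughly 29% lower), yet moving about 2.44 times as many bits per second would still imply around 1.74 times the power, which is why the thermal gains matter alongside the efficiency figure. If the 40% instead meant 40% less energy for the same work, energy per bit would be 0.6x and the power ratio about 1.46x.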

Manufacturing scale, packaging, and supply chain readiness

Samsung is also emphasizing its ability to ramp production and meet rising demand. The company points to its large DRAM manufacturing capacity and dedicated infrastructure as a way to strengthen supply chain resilience as HBM4 adoption grows. It also highlights close coordination between its foundry and memory operations using DTCO (Design Technology Co-Optimization) to improve quality and yields, plus extensive in-house advanced packaging expertise to streamline manufacturing and cut lead times.

On the customer side, Samsung says it is expanding technical collaboration with key partners, including global GPU makers and hyperscalers working on next-generation ASIC designs—an important signal given how tightly modern AI accelerators are co-designed around memory performance and packaging constraints.

HBM outlook: ramping through 2026 and beyond

Looking forward, Samsung expects its total HBM sales to more than triple in 2026 compared to 2025, and says it is proactively expanding HBM4 capacity to keep up. The roadmap doesn’t stop with HBM4 either: the company expects sampling for HBM4E to begin in the second half of 2026, and it anticipates custom HBM samples reaching customers in 2027 based on specific requirements.

With mass production and shipments now underway, Samsung’s HBM4 launch sets the stage for faster, higher-capacity, and more power-efficient memory stacks aimed squarely at the next wave of AI GPUs, accelerators, and hyperscale data center platforms.