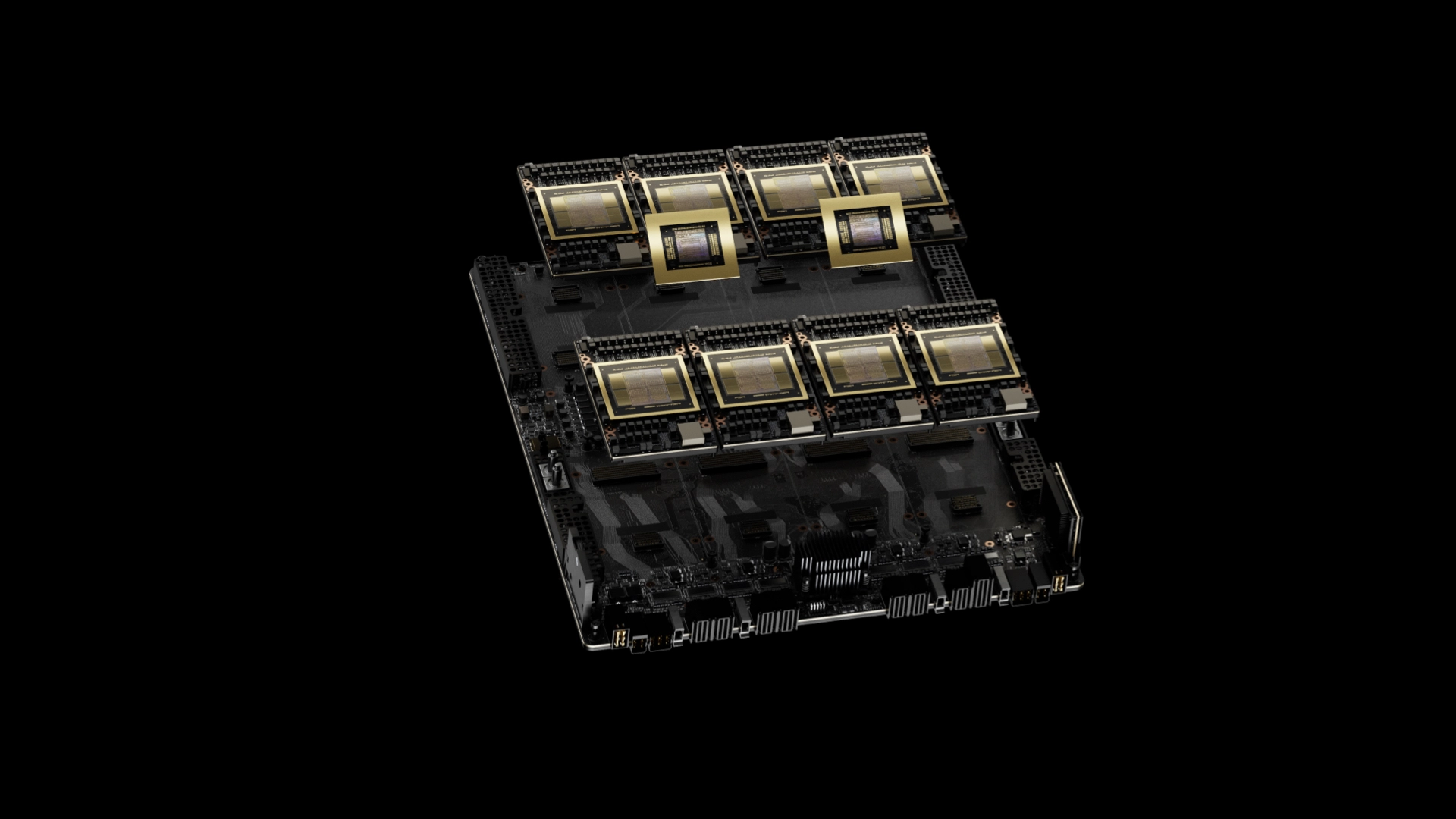

NVIDIA reports a new inference speed milestone for its Blackwell architecture, reached largely through software optimization. A single DGX B200 node with eight NVIDIA Blackwell GPUs achieved more than 1,000 tokens per second (TPS) per user on Meta’s 400-billion-parameter Llama 4 Maverick model. The result shows how much of that performance depends on NVIDIA’s software ecosystem as well as its hardware.

NVIDIA says a Blackwell server can also reach up to 72,000 TPS when configured for maximum throughput rather than per-user speed. As highlighted during Jensen Huang’s Computex keynote, companies now showcase their AI progress by demonstrating how many tokens their hardware can produce, and NVIDIA is clearly competing on that metric.

Crossing the 1,000 TPS mark required extensive software optimization using TensorRT-LLM and a speculative decoding draft model, which together delivered a fourfold increase in performance. Speculative decoding pairs the large model with a smaller, faster “draft” model that predicts several tokens ahead; the large model then verifies those tokens in parallel in a single pass. Because the large model still checks every token, the technique speeds up large language model (LLM) inference without sacrificing text quality, and a single iteration can produce several tokens instead of one.
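The draft-then-verify loop can be sketched in a few lines of Python. This is a toy illustration only, not NVIDIA's TensorRT-LLM implementation or the EAGLE3 method: `target_model`, `draft_model`, `speculative_decode`, and `greedy_decode` are hypothetical names, the "models" are simple deterministic functions over integer tokens, and verification uses the simplest greedy-match rule (production systems that sample tokens typically use a rejection-sampling variant instead).

```python
from typing import Callable, List, Tuple

Token = int
Model = Callable[[List[Token]], Token]  # maps a context to its greedy next token


def target_model(context: List[Token]) -> Token:
    """Toy stand-in for the large model: a deterministic next-token rule."""
    return (sum(context) * 31 + len(context)) % 50


def draft_model(context: List[Token]) -> Token:
    """Toy stand-in for the small draft model.

    It reuses the target rule only so the agreement rate is easy to control;
    a real draft model is a separate, much cheaper network.
    """
    guess = target_model(context)
    # Deliberately disagree on some contexts to exercise the rejection path.
    return guess if len(context) % 4 else (guess + 1) % 50


def greedy_decode(prompt: List[Token], num_tokens: int, target: Model) -> List[Token]:
    """Baseline: one target call per generated token."""
    tokens = list(prompt)
    for _ in range(num_tokens):
        tokens.append(target(tokens))
    return tokens


def speculative_decode(
    prompt: List[Token], num_tokens: int, draft: Model, target: Model, k: int = 3
) -> Tuple[List[Token], int]:
    """Return the generated sequence and the number of verification passes."""
    tokens = list(prompt)
    target_passes = 0
    while len(tokens) - len(prompt) < num_tokens:
        # 1. The draft model proposes k tokens autoregressively.
        proposal: List[Token] = []
        for _ in range(k):
            proposal.append(draft(tokens + proposal))

        # 2. The target checks all proposed positions. A real system scores
        #    them in one batched forward pass; here that pass is simulated
        #    with a loop and counted once.
        target_passes += 1
        accepted: List[Token] = []
        for i in range(k + 1):
            expected = target(tokens + accepted)
            accepted.append(expected)  # always keep the target's own token
            if i == k or proposal[i] != expected:
                break  # bonus token after full acceptance, or a correction
        tokens.extend(accepted)
    return tokens[: len(prompt) + num_tokens], target_passes
```

Under these assumptions, `speculative_decode([1, 2, 3], 20, draft_model, target_model)` returns exactly the same tokens as `greedy_decode([1, 2, 3], 20, target_model)` while using fewer verification passes than generated tokens, which is the source of the speedup: the output is unchanged, but the expensive model runs less often.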

NVIDIA’s draft model uses an EAGLE3-based architecture, an approach that speeds up LLM inference at the software level rather than through hardware changes. The milestone strengthens NVIDIA’s position in AI inference, with Blackwell now tuned for very large LLMs such as Llama 4 Maverick, and it is a meaningful step toward faster, more responsive AI interactions.