NVIDIA has taken a significant leap forward with its new Blackwell GPUs, showcasing their prowess in the MLPerf Training v4.1 workloads. These GPUs deliver up to 2.2 times the performance of their predecessors, the Hopper chips. Blackwell made a strong debut in August with the AI inference benchmarks, and it is now setting new records in AI training.

Demand for computational power in the AI sector is skyrocketing as new models burst onto the scene, each requiring more capable training and inference hardware, and NVIDIA is answering that call with Blackwell. The first performance tests have been conducted on some of the most challenging workloads: Llama 2 70B fine-tuning, Stable Diffusion text-to-image generation, DLRMv2 recommendations, BERT natural language processing, RetinaNet object detection, GPT-3 pre-training, and R-GAT graph neural networks.

Together, these workloads span an extensive range of applications, which is why they are widely regarded as a measure of AI training efficiency. Each is scored on time to train, and more than 125 MLCommons members help keep the tests aligned with industry demands.

Since the introduction of Hopper, NVIDIA’s H100 GPUs have improved significantly in large language model (LLM) pre-training, now delivering 1.3 times more performance per GPU. Hopper still holds the top spot in AI training across a range of benchmarks and has the most extensive data center deployments, built on interconnect technologies such as NVLink and NVSwitch.

Thanks to ongoing software optimization, Hopper performance has kept climbing. On the demanding GPT-3 training benchmark, it now delivers a sixfold improvement over the earlier HGX A100 and a 70% boost over the HGX H100 submissions from June 2023.

Now for Blackwell, which NVIDIA positions as the future of AI data centers. NVIDIA set records with its Nyx AI supercomputer, built from DGX B200 systems, which beat Hopper with twice the performance in GPT-3 pre-training and a 2.2 times speedup in Llama 2 70B fine-tuning.

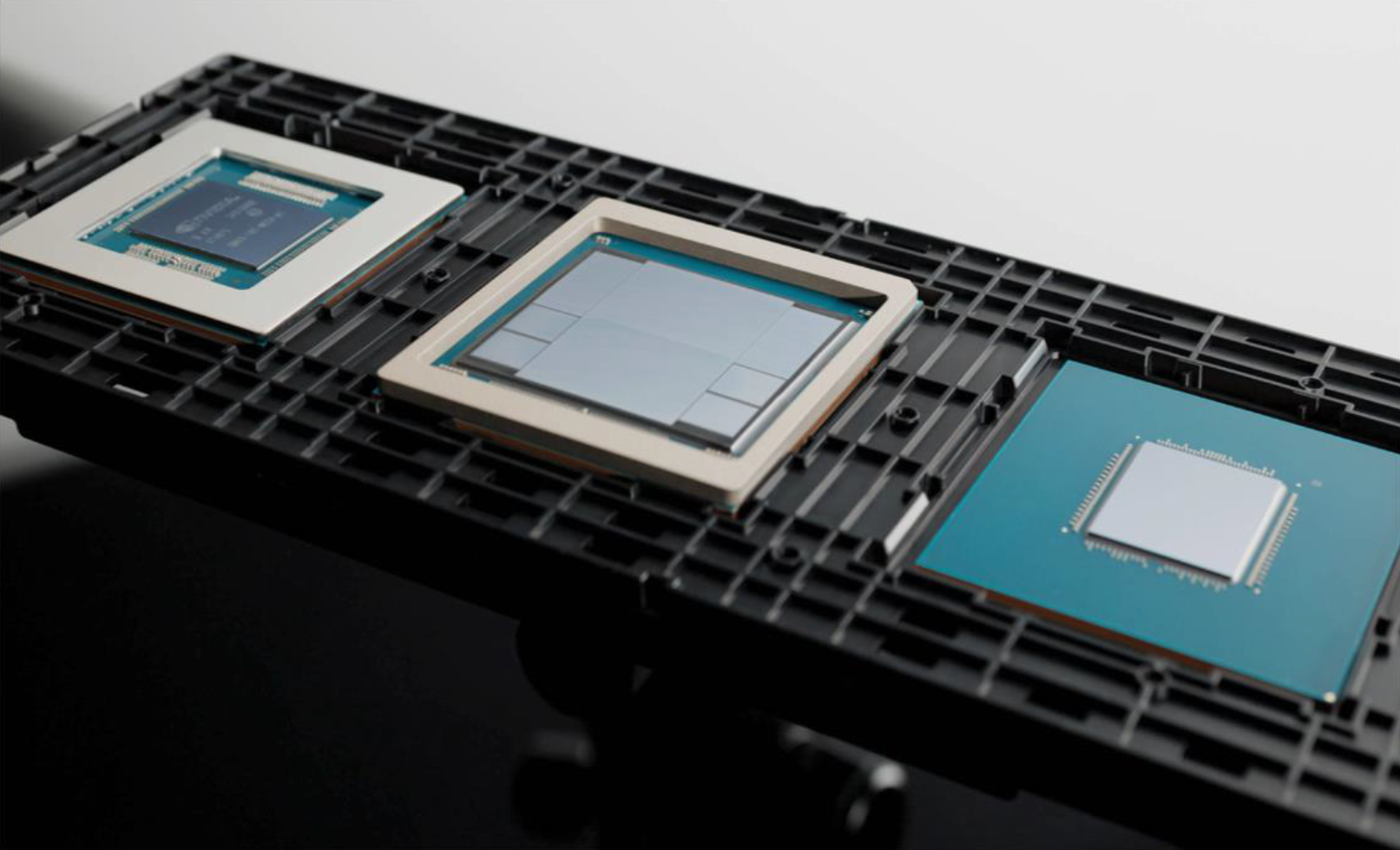

Blackwell introduces technologies that NVIDIA detailed at Hot Chips 2024, and partner support is adding to the momentum behind this new GPU generation. Notably, the architecture includes optimized math operations, such as matrix multiplies, which improve the efficiency of the deep learning algorithms built on them.

Blackwell's standout feature is its high-bandwidth memory, which lets it run GPU-intensive benchmarks such as GPT-3 on fewer GPUs without sacrificing performance, making better use of hardware resources than previous generations.

NVIDIA emphasizes its strategy of refining its technology rapidly and deploying it at broad scale, highlighting its ability to deliver complete data center solutions. Next up is Blackwell Ultra, slated for 2025, which promises more memory and greater compute capacity.

The roadmap continues with Rubin, expected in 2026, followed by further enhancements with new memory configurations anticipated in 2027. With Blackwell now in full-scale production, NVIDIA is poised to deliver record-setting results in both performance and revenue over the coming quarters.