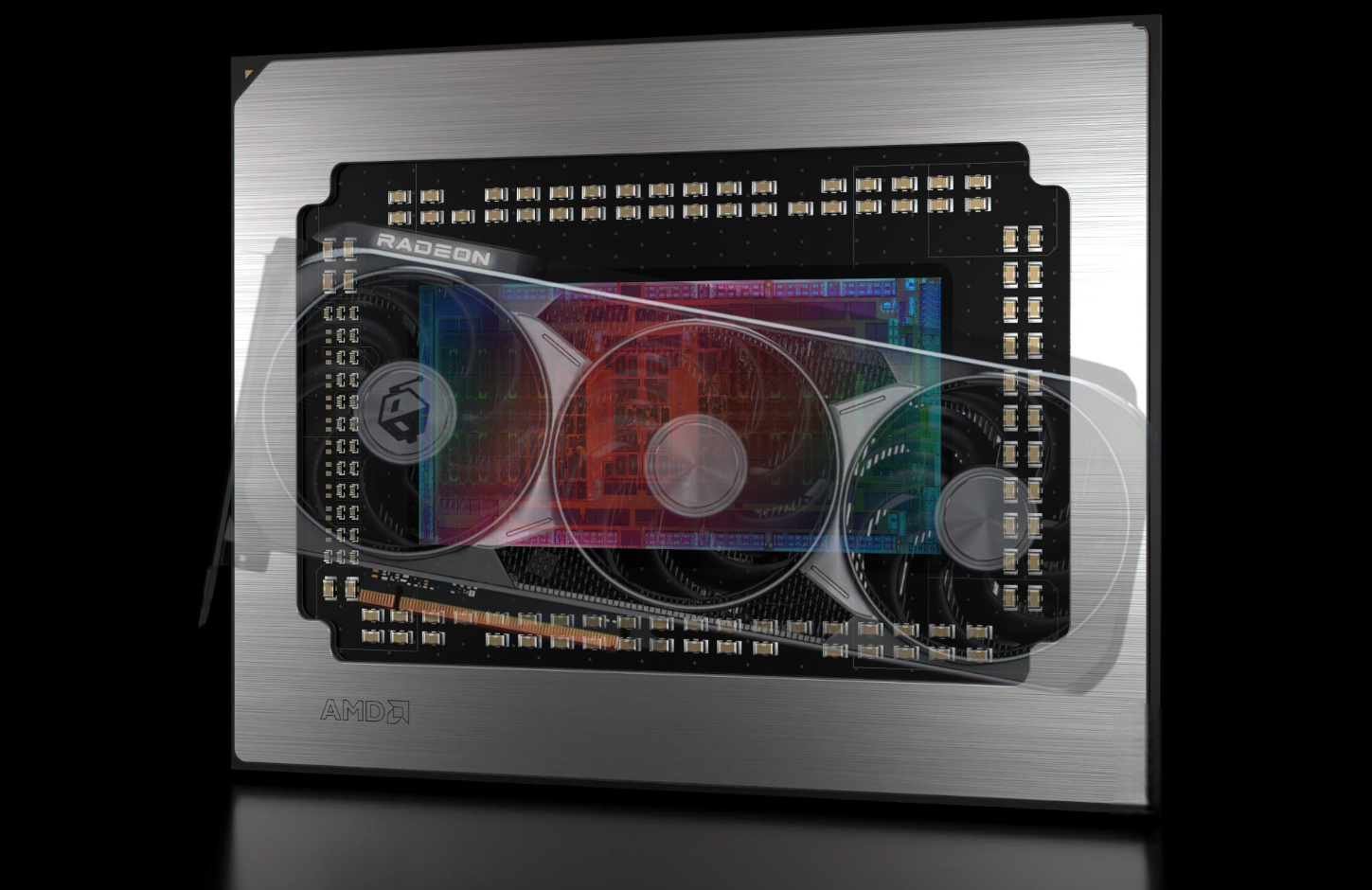

Demand for AI compute has exploded, and it’s pushing startups to chase bigger and better GPUs wherever they can find them. TinyCorp, an AI company known for betting heavily on consumer graphics cards for training and inference, is now floating an especially bold idea: it wants AMD to “go all-in” on RDNA 5 with a massive 96 GB VRAM model priced at around $2,500 per card.

TinyCorp’s vision isn’t just about owning powerful hardware for its own sake. The company has outlined an investor-style pitch built around monetizing GPU compute by selling access through popular AI compute marketplaces. The concept is simple on paper: secure a dedicated facility, fill it with thousands of high-memory GPUs, and turn that into recurring revenue by renting compute for inference and training tasks.

TinyCorp’s plan calls for raising funding to purchase an $11.5 million building and operate a 5 MW power setup in Oregon. The location is framed as an economics play: the region’s power and operating costs could make compute resale more attractive. From there, the startup imagines waiting for AMD’s next-generation RDNA 5 GPUs, then preordering roughly 3,000 cards, ideally negotiated down to that $2,500 per-unit target. The end goal is to generate millions in revenue by packaging and selling compute capacity into the growing token-driven AI economy.
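To put the plan’s headline numbers side by side, here is a rough back-of-the-envelope sketch. The dollar figures, card count, power figure, and 96 GB capacity come from the pitch as described above; the function names are purely illustrative, and the per-card power number is only an upper bound that ignores cooling, host systems, and networking.

```python
# Back-of-the-envelope figures for the plan described above.
# Inputs are taken from the article; function names are hypothetical.

BUILDING_COST_USD = 11_500_000   # building purchase price
FACILITY_POWER_W = 5_000_000     # 5 MW power setup
CARD_COUNT = 3_000               # "roughly 3,000 cards"
TARGET_PRICE_USD = 2_500         # TinyCorp's per-card target
VRAM_PER_CARD_GB = 96            # hoped-for memory per card


def gpu_spend_usd(card_count: int, unit_price_usd: int) -> int:
    """Total spend on cards at a given unit price."""
    return card_count * unit_price_usd


def power_budget_per_card_w(facility_power_w: int, card_count: int) -> float:
    """Upper bound on watts per card if all facility power went to GPUs.

    Real deployments lose part of this budget to cooling, host CPUs,
    and networking, so the usable figure would be lower.
    """
    return facility_power_w / card_count


cards_usd = gpu_spend_usd(CARD_COUNT, TARGET_PRICE_USD)
total_usd = BUILDING_COST_USD + cards_usd
watts_per_card = power_budget_per_card_w(FACILITY_POWER_W, CARD_COUNT)
total_vram_gb = CARD_COUNT * VRAM_PER_CARD_GB

print(f"GPU spend at target price: ${cards_usd:,}")
print(f"Building plus GPUs:        ${total_usd:,}")
print(f"Power ceiling per card:    {watts_per_card:,.0f} W")
print(f"Aggregate VRAM:            {total_vram_gb:,} GB")
```

At the target price, the cards alone come to $7.5 million, or $19 million including the building, before any spending on servers, cooling, or networking.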

The attention-grabbing part is the assumption behind the entire strategy: a consumer-focused RDNA 5 GPU with 96 GB of VRAM will exist, be available in volume, and be affordable enough to buy by the thousands.

RDNA 5 is currently expected to arrive around mid-2027. But even if that timeline holds, jumping to 96 GB on a consumer-class gaming GPU would be a huge leap. VRAM capacity has already been a contentious issue in recent GPU cycles, and scaling all the way to 96 GB would require denser or more numerous memory modules, a more complex board, and substantially higher costs, especially in a world where memory supply constraints and broader DRAM/GDDR availability issues can impact pricing and volume.

Realistically, if a 96 GB AMD GPU does show up, it’s far more likely to appear under a workstation or professional product line rather than as a mainstream consumer card. That’s where large VRAM configurations typically make the most sense, both technically and financially, because professional buyers expect higher prices and specialized capabilities.

TinyCorp also tossed out an alternate path: if AMD doesn’t ship a ready-made 96 GB RDNA 5 product, the company claims it could build its own board around RDNA 5 silicon. It even speculates that RDNA 5 could use GDDR7 on a wide memory bus, and that high-density memory modules could make the big VRAM number possible—at least in theory.
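The capacity arithmetic behind that speculation can be sketched as follows. The article does not say which bus width or module density TinyCorp has in mind, so the values below are assumptions chosen for illustration: a 32-bit interface per GDDR7 chip, 3 GB (24 Gbit) modules, and an optional "clamshell" layout that places two chips on each 32-bit channel.

```python
# Rough VRAM capacity arithmetic for a GDDR7 board.
# Assumptions (not from the article): 32 bits of bus per chip,
# 3 GB modules, and clamshell mode doubling the chip count.

GDDR7_BITS_PER_CHIP = 32


def vram_capacity_gb(bus_width_bits: int, gb_per_chip: int,
                     clamshell: bool = False) -> int:
    """Total VRAM for a given bus width and per-chip density."""
    if bus_width_bits % GDDR7_BITS_PER_CHIP != 0:
        raise ValueError("bus width must be a multiple of 32 bits")
    chips = bus_width_bits // GDDR7_BITS_PER_CHIP
    if clamshell:
        # Two chips share each 32-bit channel, one on each side of the board.
        chips *= 2
    return chips * gb_per_chip


# Hypothetical configurations to compare.
for bus_width in (256, 384, 512):
    for clamshell in (False, True):
        capacity = vram_capacity_gb(bus_width, gb_per_chip=3, clamshell=clamshell)
        layout = "clamshell" if clamshell else "single-sided"
        print(f"{bus_width}-bit, {layout}: {capacity} GB")
```

Under these assumptions, only the widest option (512-bit with chips on both sides of the board) reaches 96 GB, which illustrates why the figure depends on both a wide bus and high-density modules.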

Today, the clearest example of a 96 GB GPU sits firmly in the professional category, where a single card can cost $8,000 to $10,000. That comparison is exactly why TinyCorp’s $2,500 target reads more like a wish than a forecast. Between cutting-edge silicon, premium memory capacity, and the realities of supply, a 96 GB next-gen GPU at that price is hard to imagine, especially at a scale of 3,000 units.
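A quick calculation shows the size of that gap. The prices and unit count are the ones quoted above; the helper name is hypothetical, and the result is simple arithmetic rather than a pricing forecast.

```python
# Compare TinyCorp's target price with the quoted professional price range.
# All inputs come from the article; the helper name is illustrative only.

TARGET_PRICE_USD = 2_500
PRO_PRICE_RANGE_USD = (8_000, 10_000)
CARD_COUNT = 3_000


def implied_discount(target_usd: int, reference_usd: int) -> float:
    """Fraction by which the target undercuts a reference price."""
    return 1 - target_usd / reference_usd


for pro_price in PRO_PRICE_RANGE_USD:
    discount = implied_discount(TARGET_PRICE_USD, pro_price)
    fleet_gap_usd = (pro_price - TARGET_PRICE_USD) * CARD_COUNT
    print(f"vs ${pro_price:,}: {discount:.2%} cheaper per card, "
          f"${fleet_gap_usd:,} less across {CARD_COUNT:,} cards")
```

In other words, the target implies a discount of roughly 69% to 75% against today’s professional pricing, or $16.5 million to $22.5 million less across a 3,000-card order.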

Still, the broader takeaway is more interesting than the exact numbers. TinyCorp is highlighting a real market pressure: smaller AI companies continue looking for alternatives to expensive enterprise accelerators, and consumer GPUs remain tempting because they’re more accessible and can be deployed quickly—when supply allows. Whether or not a 96 GB RDNA 5 GPU ever arrives, the race for affordable, high-memory compute is only getting more intense, and startups will keep pushing chipmakers to deliver bigger configurations at lower prices.