SK hynix has announced a new round of advances in memory and storage for data centers. Just months after announcing mass production of its 12-layer HBM3e memory, the company has unveiled a 16-layer version. Compared with an 8-layer configuration, the new stack potentially doubles the memory capacity available to state-of-the-art AI accelerators, such as those produced by AMD and NVIDIA.

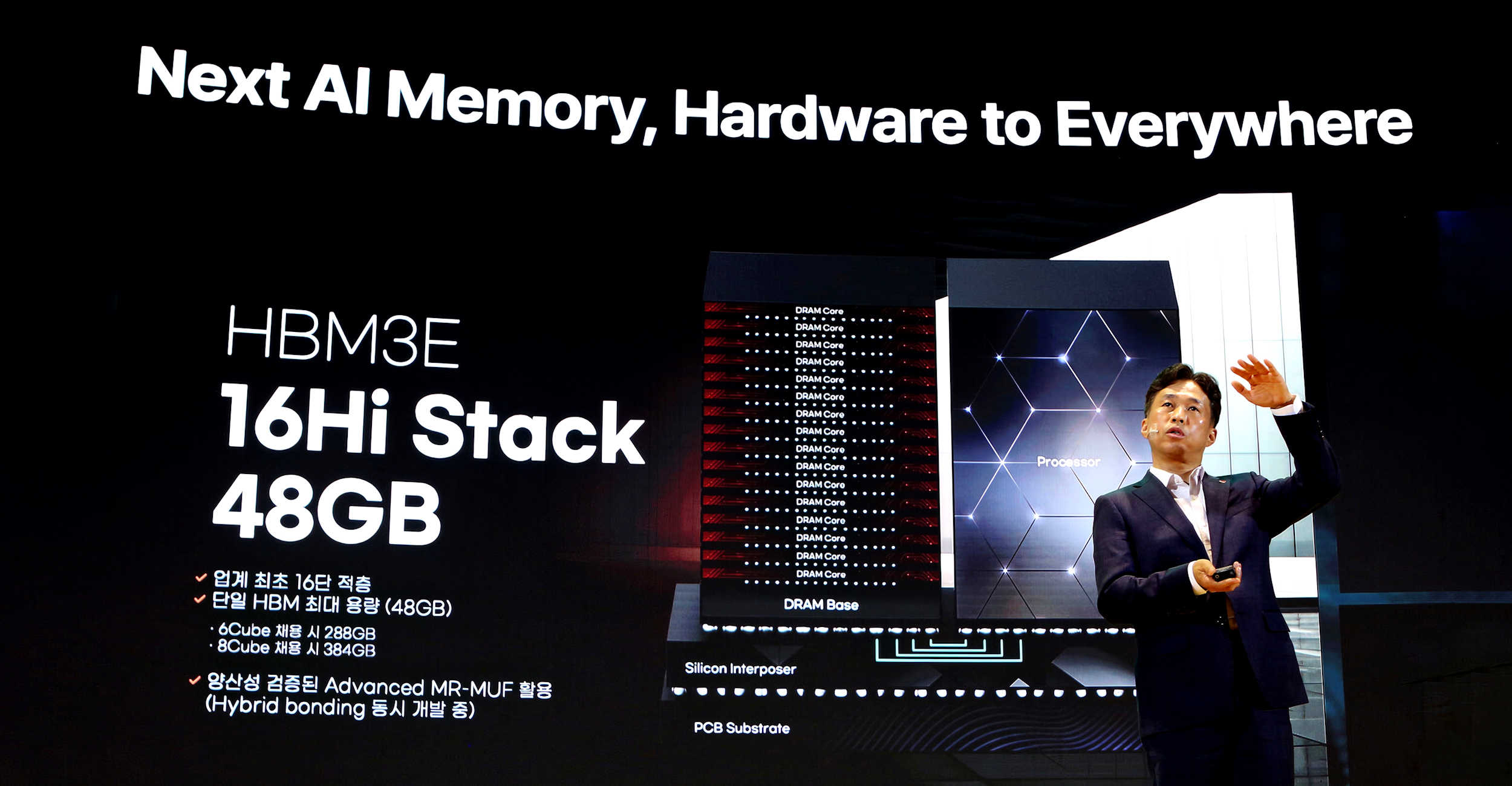

With the 16-layer HBM3e configuration, each stack can hold up to 48GB, underscoring SK hynix’s ambition to position itself as a leader in AI memory. The gain is not limited to capacity: SK hynix also claims an 18% boost in training speed and a 32% improvement in inference efficiency compared to the 12-layer predecessor.
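The capacity figures are easy to check with simple arithmetic. The sketch below assumes that every layer in a stack has the same capacity, a value inferred here from the 48GB figure for 16 layers rather than stated by SK hynix. The names `GB_PER_LAYER` and `stack_capacity_gb` are illustrative only.

```python
# Assumption: all DRAM layers in a stack have equal capacity.
# The per-layer value is inferred from the article's 48 GB / 16 layers,
# not taken from an SK hynix specification.
GB_PER_LAYER = 48 / 16  # 3 GB per layer


def stack_capacity_gb(layers: int) -> float:
    """Return the capacity of one stack with the given number of layers."""
    return layers * GB_PER_LAYER


for layers in (8, 12, 16):
    print(f"{layers}-layer stack: {stack_capacity_gb(layers):.0f} GB")

# Ratio of a 16-layer stack to an 8-layer stack
print(stack_capacity_gb(16) / stack_capacity_gb(8))
```

Under that assumption, an 8-layer stack works out to 24GB and a 12-layer stack to 36GB, so moving from 8 to 16 layers doubles the capacity of each stack.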

At the recently concluded SK AI Summit, the company laid out its roadmap, including next-generation HBM4 memory that will also use 16-high stacks. SK hynix is additionally working on LPCAMM2 modules and LPDDR6 memory, and is developing PCIe 6.0 SSDs.

CEO Kwak Noh-Jung outlined SK hynix’s strategy for staying ahead in a competitive market: pioneer “World First” products, deliver “Beyond Best” solutions that set the industry standard, and pursue innovations optimized for the AI era.

The market for 16-high HBM is expected to expand with the introduction of HBM4, and SK hynix is preparing ahead of that shift. To secure technological stability, the company is developing 48GB 16-high HBM3e and aims to distribute samples next year. It is using the Advanced MR-MUF process that proved successful in producing the 12-high products.

SK hynix is also developing a range of products tailored to customer demands for varying capacities and higher performance. Because AI systems require enormous memory capacity, the company is working on technologies such as CXL Fabrics, which promise ultra-high capacity with low energy consumption in a compact footprint.

SK hynix is also tackling the so-called “memory wall,” the bottleneck that arises when memory bandwidth cannot keep pace with processor throughput. By integrating computational functions into or alongside memory, through technologies such as Processing in Memory (PIM) and Processing near Memory (PNM), the company aims to reduce the data movement between memory and processors. SK hynix expects these efforts to reshape the structure and direction of future AI systems.

Taken together, these developments mark a significant step forward for memory technology and are intended to keep SK hynix a key player as AI systems evolve.